The AI agent vs. chatbot distinction matters because both systems answer questions and both run on artificial intelligence, yet they operate on different architectures and produce different business outcomes.

A chatbot responds to user inputs within a defined conversational scope. An AI agent receives a goal, designs its own workflow, and executes multi-step tasks without further direction.

Choosing between them shapes whether your deployment handles routine queries or drives strategic outcomes. The wrong choice produces frustrated users, wasted budget, or both.

What Is a Chatbot?

A chatbot is a software program that responds to user questions through a conversational interface. The term covers two architecturally distinct systems: rule-based chatbots and generative AI chatbots.

Both share the same surface behavior of accepting text input and returning a response. Underneath, they differ in nearly every meaningful way.

How They Work and Their Architecture

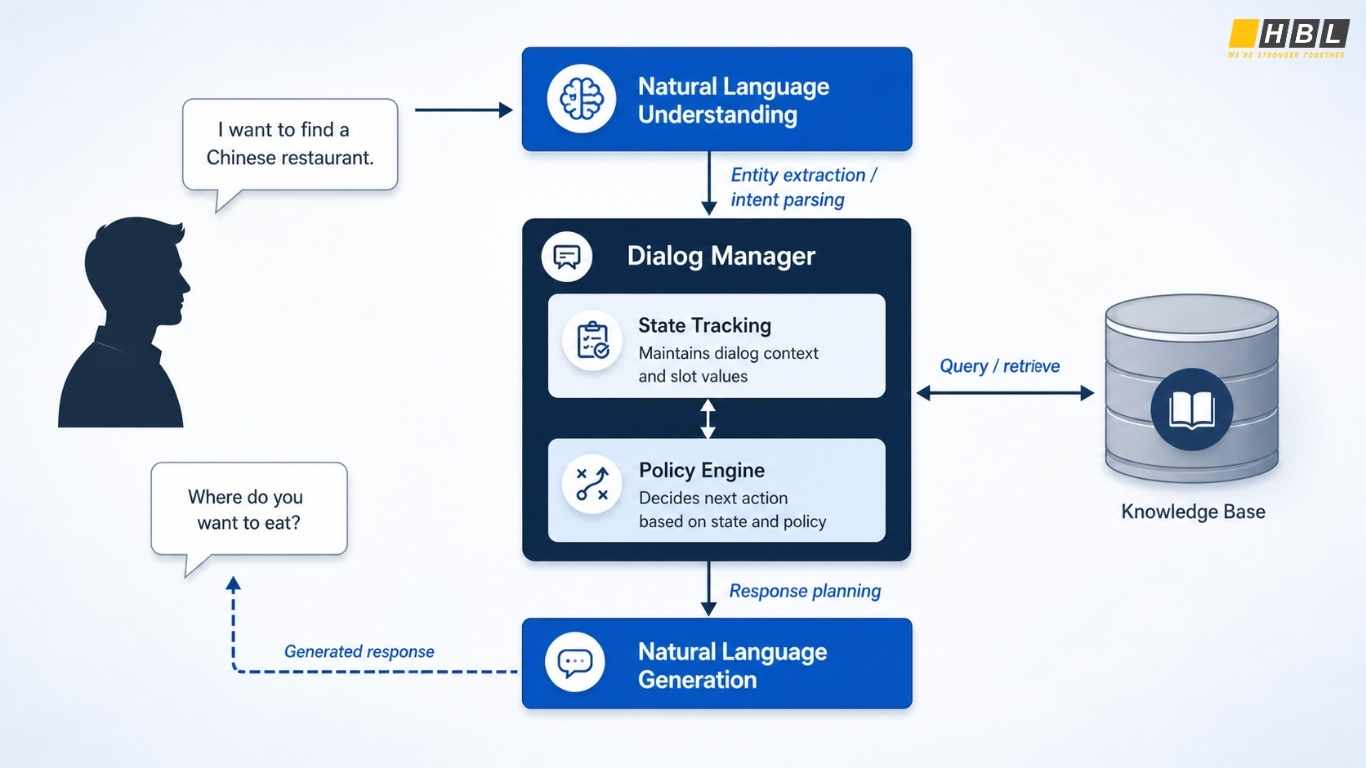

Chatbot architecture rests on three connected components:

- User interface (UI): where the human enters input

- Natural language processing (NLP) engine: parses the input

- Response generation component: where rule-based and generative chatbots diverge

In a rule-based system, the third component is a rules engine that applies preset logic. In a generative system, that component is replaced by a large language model (LLM) trained on massive text datasets.

The substitution looks small in a diagram, yet it changes how the system behaves at every level.
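
The three-component layout can be sketched in a few lines of Python. This is a minimal illustration, not a production design: every name here (`parse`, `rules_responder`, `llm_responder`, `chatbot`) is hypothetical, and the LLM is replaced by a placeholder function so the example runs without any external service.

```python
from typing import Callable

# Hypothetical names throughout; this only illustrates the three-part layout.
Responder = Callable[[list[str]], str]

def parse(text: str) -> list[str]:
    """Stand-in for the NLP engine: lowercase the input and split it into tokens."""
    return text.lower().replace("?", " ").split()

def rules_responder(tokens: list[str]) -> str:
    """Rules engine: preset logic that returns fixed answers."""
    if "hours" in tokens:
        return "We are open 9am to 6pm."
    return "Sorry, I did not understand. Please rephrase."

def llm_responder(tokens: list[str]) -> str:
    """Placeholder for an LLM call; a real system would query a model here."""
    return f"[generated reply for: {' '.join(tokens)}]"

def chatbot(user_input: str, respond: Responder) -> str:
    """UI input -> NLP engine -> response generation component."""
    return respond(parse(user_input))
```

Calling `chatbot("What are your hours?", rules_responder)` and `chatbot("What are your hours?", llm_responder)` exercises the same first two components and differs only in the third, which is the swap the diagram describes.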

Rule-Based Chatbots

Rule-based chatbots follow if/then logic. The system scans user input for specific keywords, matches them against entities defined by developers, and returns a corresponding response from a fixed set of options.

Some simpler implementations skip full NLP and rely on keyword detection alone.

Consider a shopper asking, “Do you have the blue running shoes in size 9?” The chatbot detects the entities “running shoes,” “blue,” and “size 9,” matches them to inventory data, and returns a predefined response such as “Yes, the blue running shoes in size 9 are in stock at our downtown location.”

The interaction works because the question is anticipated, the entities are recognized, and the answer exists in the rules database.
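
The shoe example can be written as a small rule-based matcher. The sketch below assumes a tiny hard-coded inventory and entity lists; `INVENTORY`, `PRODUCTS`, `COLORS`, `extract_entities`, and `reply` are illustrative names, not part of any real product.

```python
import re

# Hypothetical inventory and entity lists, for illustration only.
INVENTORY = {("running shoes", "blue", "9"): "downtown"}
PRODUCTS = ["running shoes", "sandals"]
COLORS = ["blue", "red", "black"]

def extract_entities(text):
    """Find the product, color, and size by plain keyword and pattern matching."""
    text = text.lower()
    product = next((p for p in PRODUCTS if p in text), None)
    color = next((c for c in COLORS if c in text), None)
    match = re.search(r"size (\d+)", text)
    size = match.group(1) if match else None
    return product, color, size

def reply(text):
    """Return a predefined response, or a fallback when an entity is missing."""
    product, color, size = extract_entities(text)
    if None in (product, color, size):
        return "Sorry, I did not catch that. Could you rephrase?"
    location = INVENTORY.get((product, color, size))
    if location:
        return f"Yes, the {color} {product} in size {size} are in stock at our {location} location."
    return f"Sorry, the {color} {product} in size {size} are out of stock."
```

A question the developer did not anticipate, such as "Can I pay with crypto?", yields no entities and falls through to the rephrase message, which is the failure mode rule-based systems are known for.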

Generative AI Chatbots

Generative AI chatbots replace the rules engine with an LLM. These models are trained on billions of words and use deep learning, neural networks, and NLP to interpret context and produce responses.

The chatbot generates a response on the fly rather than picking one from a list.

This produces two practical advantages over rule-based systems:

- Linguistic flexibility: generative chatbots handle complex linguistic structures and ambiguous phrasing that rule-based systems cannot parse

- Adaptive learning: they improve over time as their underlying models are retrained

A rule-based system that has not been updated since deployment behaves on day 500 exactly as it did on day one.

Rule-Based vs. Generative Chatbots

| Dimension | Rule-Based Chatbot | Generative AI Chatbot |
|---|---|---|
| Architecture | UI + NLP engine + rules engine | UI + NLP engine + LLM |
| Response mechanism | Selects from predefined responses | Generates novel responses |
| Adaptability | Static; requires manual updates | Improves through retraining |
| Use case fit | Predictable, repetitive queries | Open-ended, creative tasks |
| Hallucination risk | None; bounded by rule set | Present; outputs may be ungrounded |

Limitations and Risks

Rule-based chatbots fail outside their scope. Any query the developer did not anticipate produces either a wrong answer or a fallback message asking the user to rephrase. The system cannot reason about novel inputs.

Generative chatbots carry the opposite risk: hallucination. The LLM may produce fluent, confident responses that are ungrounded in fact.

The same flexibility that lets the model handle unfamiliar questions also lets it fabricate plausible nonsense.

For high-stakes applications such as medical guidance or financial advice, this is a defining constraint that determines whether the system can be deployed at all.

When to Use a Chatbot

A chatbot is the correct choice when the conversational scope is narrow and the cost of wrong answers is low. Whether to use a rule-based chatbot or a generative AI chatbot depends on three variables:

- Query predictability

- User expectation

- Tolerance for error

Predictable queries with low error tolerance favor rule-based systems. Predictable queries with high error tolerance and demand for natural conversation favor generative chatbots. Anything beyond that range probably needs an assistant or an agent.

Practical Use Cases

Rule-based chatbots fit shipping inquiries, order status checks, store hours, return policies, and other frequently asked questions where the answer is fixed and the entities are clearly defined.

They are inexpensive to operate, predictable in output, and immune to hallucination because they cannot say anything outside their script.

Generative AI chatbots fit creative tasks, brainstorming, drafting, and any interaction where the user expects natural conversation rather than menu navigation.

They suit internal employee tools where occasional inaccuracy is acceptable and consumer applications where conversational fluency drives engagement. An AI-powered chatbot platform built on an LLM offers flexibility that no rules engine can match, at the cost of unpredictable failure modes.

Our Case Study: AI Chatbot for a Life Insurance Company

Client

A life insurance company seeking to improve customer communication, reduce manual support workload, and create a smoother path from initial inquiry to sales consultation.

Business Challenge

Life insurance products can be difficult for customers to understand because they often involve multiple plans, conditions, procedures, and required documents.

Customers may ask questions such as:

- What plan should I choose?

- What documents do I need?

- How does the claim process work?

- Can I change my policy later?

- When should I speak with a consultant?

Before adopting AI, the client relied heavily on support teams and sales representatives to answer repetitive inquiries. This created several challenges:

- Long response time during busy periods

- Inconsistent answer quality depending on the staff member

- High workload for sales and support teams

- Difficulty guiding customers step by step through complex insurance information

- Missed opportunities to connect interested users with the right sales representative

Solution

HBLAB supported the introduction of an AI chatbot powered by Natural Language Processing, designed to understand user questions and guide customers through insurance-related inquiries in a clear, step-by-step manner.

Instead of forcing users to follow fixed menu flows, the chatbot can interpret natural language input and identify the user’s intent. It then provides relevant guidance based on the inquiry context.

When the question requires deeper consultation, the chatbot encourages users to connect with a sales representative, helping bridge the gap between digital self-service and human advisory support.

Key Features

- Natural language understanding for customer inquiries

- Step-by-step guidance for insurance products, procedures, and required documents

- Automated responses for frequently asked questions

- Intent detection to understand whether the user needs information, comparison, procedure support, or sales consultation

- Smooth handoff to sales representatives when human support is needed

- Support for customer education before direct consultation

- Improved lead qualification by identifying users with clear interest or needs

Business Value

The chatbot helps the insurance company improve both operational efficiency and customer experience.

Key expected outcomes include:

- Faster response to customer inquiries

- Reduced workload for support and sales teams

- More consistent communication quality

- Better customer understanding of insurance products and procedures

- Stronger connection between online inquiries and sales follow-up

- Improved lead nurturing before consultation

- Scalable customer support without increasing human resources at the same pace


Why Generative AI Chatbots Matter

Compared with traditional rule-based chatbots, generative AI chatbots offer greater flexibility.

A rule-based chatbot can only respond based on predefined flows and fixed answer patterns. This works well for simple FAQs but often fails when users ask long, unclear, or unexpected questions.

A generative AI chatbot can understand more complex language and generate natural responses in real time. This makes it more suitable for insurance scenarios where customers may describe their situation in different ways.

For example, instead of selecting from a menu, a user can ask:

“I am considering life insurance for my family, but I am not sure what information I need to prepare first.”

A generative AI chatbot can understand the context and provide a more helpful, conversational response.

Limitations to Consider

Despite its flexibility, a generative AI chatbot also has important limitations, especially in high-stakes industries such as insurance, healthcare, and finance.

Again, the biggest risk is hallucination.

This means the AI may generate answers that sound natural and confident but are not fully accurate or not based on the company’s official information.

For an insurance company, this can create serious risks, such as:

- Providing incorrect policy explanations

- Giving outdated information about procedures or documents

- Misstating terms, conditions, or eligibility

- Creating confusion before sales consultation

- Increasing compliance and trust risks

Because insurance decisions can directly affect customers’ financial planning and protection, AI responses must be grounded in verified, approved information.

How HBLAB’s M-RAG Helps Solve This Challenge

To address the hallucination risk, HBLAB provides M-RAG, an enterprise-ready knowledge retrieval solution designed for accurate and reliable AI responses.

M-RAG enables the chatbot to generate answers based strictly on the company’s verified source documents, such as:

- Product brochures

- Policy documents

- Internal manuals

- FAQ databases

- Sales guidelines

- Claim procedure documents

- Compliance-approved materials

Instead of relying only on the language model’s general knowledge, M-RAG retrieves relevant information from trusted documents before generating a response.

This helps the chatbot:

- Reduce hallucinations

- Provide answers with stronger factual grounding

- Improve consistency across customer interactions

- Handle complex questions more accurately

- Support traceable and evidence-based responses

- Maintain higher reliability in enterprise environments

M-RAG is especially valuable for life insurance companies because it combines the conversational flexibility of generative AI with the reliability required for customer-facing and internal advisory workflows.
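
The retrieve-then-generate pattern can be illustrated with a short sketch. The source does not describe M-RAG's internals, so this is a generic example of the technique and not HBLAB's implementation. It assumes two made-up document snippets and uses simple word overlap in place of the vector search a real system would likely use. The function only builds a grounded prompt; the LLM call itself is left out.

```python
import re

# Hypothetical approved snippets; a real deployment would index full documents.
DOCUMENTS = {
    "claims-guide": "To file a claim, submit the claim form and a death certificate.",
    "policy-faq": "Policyholders can change the beneficiary at any time in writing.",
}

def tokens(text):
    """Lowercase the text and return its set of alphabetic words."""
    return set(re.findall(r"[a-z]+", text.lower()))

def retrieve(question, k=1):
    """Rank documents by word overlap with the question (a crude stand-in for vector search)."""
    q = tokens(question)
    scored = [(len(q & tokens(body)), doc_id) for doc_id, body in DOCUMENTS.items()]
    scored = [(score, doc_id) for score, doc_id in scored if score > 0]
    scored.sort(reverse=True)
    return [doc_id for _, doc_id in scored[:k]]

def build_prompt(question):
    """Return a prompt grounded in retrieved sources, or None when nothing relevant is found."""
    doc_ids = retrieve(question)
    if not doc_ids:
        return None  # the caller should hand off to a human rather than let the model guess
    context = "\n".join(f"[{d}] {DOCUMENTS[d]}" for d in doc_ids)
    return f"Answer using only the sources below and cite them.\n{context}\nQuestion: {question}"
```

Returning `None` when retrieval finds nothing is one possible design choice: it keeps the model from answering out of general knowledge, which is the behavior grounding is meant to prevent. Tagging each snippet with its document ID is what makes a traceable answer possible.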

What Is an AI Assistant?

An AI assistant is a generative-AI-powered system that interprets natural language input, personalizes responses, and executes backend tasks. It uses NLP, natural language understanding (NLU), and machine learning to understand open-ended queries without forcing the user into predefined menus.

AI assistants remember conversation history and apply context across turns within a session, sometimes across sessions.

The defining capability is task execution. A traditional chatbot tells the user how to update their account. An AI assistant updates the account.

It can send emails, modify records, retrieve data from connected systems, and complete operations that previously required a human agent.
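
Task execution usually means the model emits a structured request and application code carries it out. The sketch below assumes a hypothetical in-memory account store and two made-up tools; in a real assistant the `tool_call` dictionary would come from the LLM and the tools would call real backend systems.

```python
# Hypothetical in-memory "backend" and tool names, for illustration only.
ACCOUNTS = {"u42": {"email": "old@example.com"}}

def update_email(user_id, new_email):
    """Modify the stored record and confirm the change."""
    ACCOUNTS[user_id]["email"] = new_email
    return f"Email for {user_id} updated to {new_email}."

def get_email(user_id):
    """Read the stored record."""
    return f"Email on file: {ACCOUNTS[user_id]['email']}."

# Registry of actions the assistant is allowed to perform.
TOOLS = {"update_email": update_email, "get_email": get_email}

def execute(tool_call):
    """Run a structured tool call of the kind an LLM would emit."""
    name, args = tool_call["name"], tool_call["arguments"]
    if name not in TOOLS:
        return f"Unknown tool: {name}"
    return TOOLS[name](**args)
```

Keeping an explicit registry means the assistant can only perform actions that developers have chosen to expose, and any other request is refused instead of executed.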

Apple’s Siri, Amazon Alexa, and ChatGPT are common examples, though their capabilities vary widely depending on the integrations available to each implementation.

Chatbots vs. AI Assistants

The gap between a chatbot and an AI assistant is the difference between menu navigation and natural conversation.

A traditional chatbot forces users into preexisting categories: FAQ, order inquiry, other. When the user’s actual question does not match any category, the system fails and routes to a human. This produces frustration and provides no productivity gain over a static help page.

An AI assistant accepts the user’s question in their own words. It understands intent across phrasings, retrieves relevant information, and may take action on the user’s behalf.

The personalization layer adds further capability. The assistant can greet returning users by name, recall prior interactions, and tailor responses to user history. The result is a system that resolves cases the chatbot would have escalated, freeing human agents to handle genuinely complex problems.

The architectural distinction is simple:

- "Chatbot" describes the entire category, including rule-based systems

- AI assistants are the generative subset with NLU, memory, and task execution

All AI assistants are chatbots in the broad sense, while the reverse is not true.

What Is an AI Agent?

An AI agent is an autonomous system that receives a goal and pursues it through self-directed action. Built on an LLM like an AI assistant, the agent extends the architecture with three capabilities:

- Tool use

- Persistent memory across sessions

- Workflow design

Given a single initial prompt, the agent decomposes the goal into subtasks, accesses external data sources and APIs, and executes the steps needed to achieve the objective.

The behavioral difference is autonomy. An AI assistant waits for the next user prompt after every response. An AI agent continues working toward the goal without further direction.

A prompt such as “optimize our sales strategy” triggers an agent to gather data, run analyses, draw conclusions, and execute changes through connected tools. The user does not specify the steps; the agent does.

Agents also accumulate experience. Persistent memory means decisions made in one session inform behavior in the next. An agent that learned a particular tool produces unreliable outputs will avoid it on future tasks without being told.
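
A stripped-down agent loop shows how planning, tool use, and persistent memory fit together. Everything in this sketch is hypothetical: the planner is hard-coded where a real agent would ask an LLM to produce the plan, the tools operate on made-up numbers, and `memory` is a plain dictionary standing in for a store that survives between sessions.

```python
# All names are hypothetical. The planner is hard-coded; a real agent would
# ask an LLM to decompose the goal into steps.
def plan(goal):
    return ["fetch_sales", "summarize"]

def fetch_sales(state):
    """Tool: load data (made-up figures stand in for an external source)."""
    state["sales"] = [120, 95, 140]

def summarize(state):
    """Tool: analyze the data gathered by earlier steps."""
    state["summary"] = f"average={sum(state['sales']) / len(state['sales']):.1f}"

TOOLS = {"fetch_sales": fetch_sales, "summarize": summarize}

def run_agent(goal, memory):
    """Execute every planned step without waiting for further prompts."""
    state = {"goal": goal}
    for step in plan(goal):
        if step in memory.get("unreliable_tools", set()):
            continue  # skip tools that earlier sessions flagged as unreliable
        TOOLS[step](state)
        memory.setdefault("history", []).append(step)
    return state
```

Passing the same `memory` object into later runs is what lets a flag such as `unreliable_tools` change behavior in a future session without the user repeating it.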

AI Assistants vs. AI Agents

Reactive vs. Autonomous

The clearest line between assistants and agents runs through autonomy.

AI assistants are reactive systems. They respond to each prompt and wait for the next one. The interaction follows a back-and-forth rhythm of prompt and response, with the user driving every turn.

Improvements come through prompt tuning and fine-tuning the underlying model on task-specific examples.

AI agents are proactive systems. A single goal-oriented prompt initiates a sequence of independent actions. The agent designs its own workflow, calls external tools, queries data sources, and revises its approach based on intermediate results.

Both architectures share the LLM foundation. The agent layer adds reasoning, planning, and execution capabilities that the assistant does not possess.

Use cases reflect this divide:

- Assistants handle customer service, virtual help desks, and code generation, where each task is bounded and a human reviews the output.

- Agents take on automated trading, network monitoring, and sales strategy optimization, where the system must analyze large datasets, make decisions, and act in real time.

The question of what separates an AI agent from a chatbot resolves cleanly at this layer:

- Chatbots return responses to questions

- Assistants understand intent and complete tasks on behalf of users

- Agents pursue stated goals through self-directed multi-step workflows

Limitations and Risks

Both assistants and agents share two structural weaknesses: brittleness and a dependence on human verification.

They are brittle because small changes in prompt phrasing can produce different outputs, and adversarial inputs can derail expected behavior. Their outputs also require human verification, especially in domains where errors carry material consequences.

Agents introduce additional risks beyond those of assistants. Because they run extended workflows without supervision, they can enter feedback loops where flawed reasoning compounds across steps.

Computational costs are also higher, since each agent task may involve many LLM calls, tool invocations, and data retrievals.

Without proper monitoring, an unsupervised agent can consume significant resources or produce cascading errors before anyone notices.
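
One simple form of monitoring is a hard cap on how many actions a single run may take. The sketch below is an assumption about how such a guard might look; `StepBudget`, `BudgetExceeded`, and `run_with_budget` are hypothetical helpers, and a real deployment would likely track tokens or spend as well as step counts.

```python
class BudgetExceeded(Exception):
    """Raised when a run tries to go past its step cap."""

class StepBudget:
    """Counts agent actions and refuses any beyond a fixed cap (hypothetical helper)."""
    def __init__(self, max_steps):
        self.max_steps = max_steps
        self.used = 0

    def charge(self, n=1):
        if self.used + n > self.max_steps:
            raise BudgetExceeded(f"step cap of {self.max_steps} reached")
        self.used += n

def run_with_budget(actions, budget):
    """Run actions in order until they finish or the budget runs out."""
    done = []
    for action in actions:
        try:
            budget.charge()
        except BudgetExceeded:
            break  # stop and surface the partial result for human review
        done.append(action())
    return done
```

With `StepBudget(2)` and three queued actions, only the first two run. The cap bounds how far a runaway loop can go before someone looks at it.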

The Continuum Explained

From Chatbot to Agent

The progression from rule-based chatbot to autonomous agent describes increasing levels of capability and autonomy along a single continuum.

At the bottom sit rule-based chatbots, which match keywords to predefined responses and cannot operate outside their script.

Above them sit generative chatbots, which use LLMs to produce flexible responses while remaining reactive within a single turn.

AI assistants add NLU, memory, personalization, and task execution. They understand intent across phrasings and complete operations on the user’s behalf, while still waiting for each new prompt.

AI agents sit at the top, adding goal-directed planning, tool use, and persistent memory across sessions.

Each tier inherits the capabilities below it and adds further autonomy.

A sufficiently capable AI assistant with tool access starts to resemble an agent. A constrained agent operating within tight guardrails behaves like an assistant.

The continuum matters because product decisions rarely require pure forms. They require knowing which capability level matches the operational requirement.

How to Choose the Right Tool

The AI agent vs. chatbot decision reduces to four questions about the task. Apply this framework to your specific use case:

1. Is the query space predictable and narrow? If yes, a rule-based chatbot is sufficient and most cost-effective. Examples: shipping FAQs, store hours, basic policy lookups.

2. Does the user need natural conversation but not task execution? If yes, a generative chatbot or AI-powered chatbot platform fits. Examples: brainstorming tools, content drafting, conversational search interfaces.

3. Does the system need to understand open-ended queries and execute backend operations? If yes, deploy an AI assistant. Examples: customer service that updates accounts, IT helpdesk that resets passwords, HR tools that submit time-off requests.

4. Does the task involve multi-step workflows, tool use, and decisions made without human prompting at each step? If yes, an AI agent is the right choice. Examples: automated trading systems, autonomous research workflows, network anomaly response.

Two practical constraints sit alongside this framework:

- Cost scales with capability. Rule-based chatbots are cheapest; agents are most expensive per interaction.

- Risk tolerance scales inversely. Rule-based systems fail predictably, while agents fail in ways that are harder to anticipate and can cascade across steps.

Match the system to the lowest tier that can complete the task reliably, then add capability only when the use case demands it.

Over-engineering the AI layer is among the most common and most expensive mistakes in enterprise deployment.
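
The four questions can be condensed into a small decision function. This is a simplification for illustration: it assumes each question can be answered with a plain yes or no, and the function and parameter names are hypothetical. Cost and risk tolerance are not modeled.

```python
def choose_tier(predictable, needs_conversation, needs_backend_actions, needs_autonomy):
    """Return the lowest tier that still covers every stated requirement."""
    # Check the most demanding requirement first, so a task that needs
    # autonomy is never assigned to a tier that cannot provide it.
    if needs_autonomy:
        return "AI agent"
    if needs_backend_actions:
        return "AI assistant"
    if needs_conversation or not predictable:
        return "generative chatbot"
    return "rule-based chatbot"
```

For example, `choose_tier(predictable=True, needs_conversation=False, needs_backend_actions=False, needs_autonomy=False)` returns `"rule-based chatbot"`, while setting `needs_backend_actions=True` moves the result up to `"AI assistant"`.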

Where These Technologies Are Heading

The four types of conversational AI are evolving on parallel tracks rather than replacing one another. Each tier is gaining new capabilities, drawing different industries, and settling into distinct roles inside enterprise stacks. The 2025 and 2026 picture shows specialization, not consolidation.

Rule-Based Chatbots: Still Dominant in Compliance-Heavy Workflows

Rule-based systems remain the default for any interaction where every possible response must be auditable in advance. Gartner’s 2025 customer service research continues to flag rule-based deflection as the most cost-efficient first-line option for high-volume predictable queries.

Industries leaning hardest on rule-based chatbots in 2025 and 2026:

- Banking and insurance for compliance flows, KYC questions, and policy lookups

- Healthcare front-desk systems for appointment scheduling and intake forms where regulatory risk blocks generative output

- Government and utilities for fee schedules, outage reports, and benefit eligibility checks

The dominant pattern now is hybrid routing. Enterprises place rule-based logic at the front of the funnel to handle predictable queries with zero hallucination risk, then escalate ambiguous cases to a generative layer behind it.
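
Hybrid routing of this kind can be sketched as a rules lookup with a fallback. The FAQ entries and function names below are made up for illustration, and `generative_reply` is a placeholder for whatever LLM-backed layer sits behind the rules.

```python
# Hypothetical FAQ rules; generative_reply is a placeholder for an LLM-backed layer.
RULES = {
    "store hours": "We are open 9am to 6pm, Monday to Saturday.",
    "return policy": "Returns are accepted within 30 days with a receipt.",
}

def generative_reply(text):
    """Stand-in for the generative layer behind the rules."""
    return f"[escalated to generative layer: {text}]"

def route(text):
    """Answer from the rule set when a keyword matches; otherwise escalate."""
    lowered = text.lower()
    for keyword, answer in RULES.items():
        if keyword in lowered:
            return "rules", answer
    return "generative", generative_reply(text)
```

Returning the layer name alongside the answer makes it easy to log which share of traffic the rule set absorbs and which share reaches the more expensive layer.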

Generative Chatbots: Shifting Toward Retrieval and Smaller Models

Generative chatbots are moving away from large general-purpose models toward two architectural shifts.

The first is retrieval-augmented generation (RAG), which grounds responses in a verified knowledge base to reduce hallucination.

The second is the rise of smaller, fine-tuned models such as Llama 3, Mistral, and domain-specific variants that lower compute cost and latency.

Voice has become the major new frontier. Low-latency speech models from OpenAI, ElevenLabs, and Google have pushed voice-first generative chatbots into call centers, in-car assistants, and consumer devices throughout 2025.

Industry preferences worth noting:

- E-commerce is adopting conversational shopping assistants for product discovery and post-purchase support

- Marketing teams are using generative chatbots embedded in content management systems for drafting and ideation

- Internal knowledge bases at large enterprises are being rebuilt around generative search interfaces, replacing traditional intranet lookups

AI Assistants: The Workplace Productivity Layer

AI assistants are the fastest-growing tier in enterprise spending. Microsoft 365 Copilot, Google Gemini for Workspace, and Salesforce Einstein have moved from pilot programs to broad rollouts across mid-market and enterprise accounts through 2025.

Verticalized assistants now dominate specific professional workflows:

- Coding: GitHub Copilot, Cursor, and Claude Code have become standard developer tooling

- Healthcare: Abridge and Nabla handle clinical documentation, generating notes from patient conversations

- Legal: Harvey and Hebbia are widely used for contract review and case research

- Customer service: Klarna publicly reported its AI assistant handles roughly two-thirds of customer service chats, work equivalent to about 700 human agents

The defining capability shift is function calling and tool integration. Modern assistants no longer just talk; they read calendars, draft emails, query databases, and update CRM records inside the same conversation.

AI Agents: The Defining Frontier of 2025 and 2026

Industry leaders at OpenAI, Anthropic, Google, and Microsoft have publicly framed 2025 as the year agents moved from research into production. The launches that defined this shift include OpenAI’s Operator for computer-use tasks, Anthropic’s Computer Use capability, Salesforce Agentforce, and Microsoft Copilot Studio for custom agent deployment.

Gartner forecasts that by 2028, 33% of enterprise software applications will include agentic AI, compared to less than 1% in 2024. The trajectory through 2025 and 2026 supports that direction, though deployment remains uneven across industries.

Where agents are gaining real ground:

- Software engineering is the most mature agent vertical, with coding agents like Devin, Cursor, and Claude Code handling end-to-end tasks under developer review

- Financial services are deploying agents for compliance monitoring, fraud investigation, and trading research workflows

- Customer operations at large retailers and SaaS companies are running agents for ticket triage, refund processing, and account reconciliation

- Research and analysis workflows are being automated through deep-research agents that synthesize sources across hours of work

Where agents remain cautious:

- Healthcare and legal practice continue to resist autonomous agent deployment because of liability and verification requirements

- Regulated finance uses agents for back-office work but keeps customer-facing decisions under human control

The market preference settling in through 2026 is supervised autonomy. Buyers want agents that act independently within bounded workflows while reporting actions to human reviewers, rather than fully unsupervised systems.
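
Supervised autonomy can be approximated with an approval checkpoint in front of any action that changes state. The sketch below is one possible shape, under the assumption that actions are labeled by kind; `run_supervised`, the `"read"` and `"write"` labels, and the `approve` callback are all hypothetical.

```python
def run_supervised(actions, approve, auto_ok=frozenset({"read"})):
    """Run actions, pausing for approval on any kind not listed in auto_ok.

    actions: list of (kind, description, fn) tuples.
    approve: callable that takes a description and returns True or False.
    """
    log = []
    for kind, description, fn in actions:
        if kind not in auto_ok and not approve(description):
            log.append((description, "blocked"))
            continue
        fn()
        log.append((description, "done"))
    return log
```

The returned log records both completed and blocked actions, which gives human reviewers the report of agent activity that buyers are asking for.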


What Buyers Are Actually Choosing

The enterprise stack emerging in 2026 looks layered rather than singular:

- Rule-based chatbots at the entry point for compliance and high-volume FAQs

- Generative chatbots with RAG for natural conversation on grounded knowledge

- AI assistants embedded in productivity suites and vertical workflows

- AI agents for multi-step automation under human oversight

The companies winning ROI from conversational AI are matching each tier to the workload it handles best, with clear handoff rules between layers.

Frequently Asked Questions

1. What is the main difference between an AI agent and a chatbot?

A chatbot responds to user inputs within a defined conversational scope, returning answers one query at a time. An AI agent receives a goal, plans the steps required to achieve it, and executes those steps autonomously using external tools and data sources. The chatbot reacts; the agent acts.

2. Is ChatGPT a chatbot, an AI assistant, or an AI agent?

ChatGPT is most accurately classified as an AI assistant. It uses an LLM, understands natural language, maintains conversation memory within a session, and can execute tasks through plugins or tool integrations. Without those tools, it operates as a generative chatbot. With agent-style scaffolding such as function calling and autonomous workflows, it can behave as an agent.

3. Are all chatbots powered by AI?

No. Rule-based chatbots run on if/then logic and keyword matching with no machine learning involved. Generative chatbots, AI assistants, and AI agents use AI in the form of large language models, NLP, and NLU.

4. Which is more expensive to deploy: a chatbot or an AI agent?

AI agents cost significantly more per interaction. A single agent task may trigger multiple LLM calls, tool invocations, and data retrievals, while a rule-based chatbot runs on simple logic with near-zero compute cost per query. Generative chatbots and assistants sit between these extremes.

5. Can an AI agent replace human employees?

AI agents can automate well-defined, multi-step tasks such as data analysis, monitoring, and routine workflow execution. They cannot replace roles requiring judgment under ambiguity, emotional intelligence, or accountability for high-stakes decisions. Most production deployments use agents to augment human work rather than replace it.

6. What is hallucination in AI chatbots, and why does it matter?

Hallucination occurs when an LLM generates fluent, confident responses that are factually incorrect or fabricated. It matters because the output looks identical to a correct response, making errors hard to detect. For regulated industries such as healthcare, finance, and legal services, hallucination risk is often the deciding factor against deploying generative systems for customer-facing tasks.

7. When should I use a rule-based chatbot instead of a generative AI chatbot?

Use a rule-based chatbot when:

- The query space is narrow and predictable

- Wrong answers carry meaningful business or legal risk

- Budget constraints favor low operating cost

- Auditability is required (every possible response must be reviewable in advance)

8. Do AI assistants and AI agents use the same underlying technology?

Both are built on large language models, so the foundational technology is the same. The difference is the layer built on top. AI assistants add NLU, memory, and task execution. AI agents add reasoning, planning, tool orchestration, and persistent cross-session memory. An agent is essentially an assistant with the ability to act on its own across multiple steps.

9. How do I know if my use case needs an AI agent rather than an AI assistant?

You need an AI agent if the task requires the system to make decisions and take actions across multiple steps without a human prompting each one. If a human can review and approve every output before action is taken, an assistant is sufficient. If waiting for human input at each step would defeat the purpose, such as in real-time trading or 24/7 network monitoring, an agent is required.

10. What are the biggest risks of deploying an AI agent?

The three primary risks are:

- Feedback loops, where flawed reasoning compounds across steps before anyone notices

- Cost overruns from unsupervised compute consumption

- Cascading errors, where one wrong decision triggers downstream actions that are difficult to reverse

These risks make monitoring, guardrails, and human-in-the-loop checkpoints essential for any production agent deployment.

11. Can chatbots, AI assistants, and AI agents work together in the same system?

Yes, and this hybrid model is becoming the dominant enterprise pattern. Routine queries route to rule-based chatbots, conversational interactions go to generative models, task-execution workflows run through AI assistants, and complex multi-step problems escalate to agents under human supervision. Each tier handles the workload it is best suited for.

12. How long does it take to deploy each type of system?

Rule-based chatbots can be deployed in days to a few weeks depending on the rule set. Generative chatbots and AI assistants typically take weeks to a few months, factoring in integrations, prompt engineering, and testing. AI agents require the longest implementation time, often several months, because of the planning, tool integration, monitoring infrastructure, and safety testing required before production use.

13. Will AI agents make chatbots obsolete?

No. Each model serves different needs and cost structures. Rule-based chatbots will remain the most cost-effective option for high-volume predictable queries. Generative chatbots will continue serving open-ended conversational use cases. The market is moving toward layered deployments rather than a single dominant technology.
