Agentic AI orchestration is the engineering layer that coordinates autonomous software agents so they can pursue one business goal through many connected steps. An orchestrator receives a request, decides which agents should participate, manages what context each agent sees, routes tool calls into external systems, tracks state across the workflow, and inserts human review where risk or ambiguity is too high.

Enterprise agent systems rarely fail at text generation alone. They fail at routing, shared state, permissions, retries, observability, and recovery after exceptions.

When teams evaluate agentic AI orchestration, they are evaluating how well a system decomposes goals, delegates work, coordinates agents and tools, persists memory, governs access, and produces an auditable result under production conditions. Orchestration sits alongside memory, runtime, evaluation, monitoring, and governance rather than being treated as a narrow prompt design problem.

What Is Agentic AI Orchestration

Agentic AI orchestration is the coordination of autonomous AI agents, tools, memory, and decision checkpoints so a high-level goal can be executed as a managed workflow. The word agentic refers to systems that pursue goals with a meaningful degree of autonomy through reasoning, planning, action, and adaptation. The orchestration part is the control layer that decides how those capabilities get organized across the workflow.

Agentic AI orchestration begins when an orchestrator receives a broad request such as “onboard this new supplier” or “investigate this outage.” A coordinator or supervisor then decomposes that request into subtasks, selects specialized agents, routes each task to the right tool set or data source, tracks dependencies, and handles retries, escalations, or human approvals when needed. This layer is responsible for decomposition, routing, context management, tool invocation, and adaptive execution.

AI Agent Orchestration, Agent Orchestration, Agentic Orchestration

AI agent orchestration is the technical coordination of one or more AI agents inside a workflow. Agent orchestration is the broader category, because it can include AI agents, rules-based workers, robotic process automation bots, and other automated actors. Agentic orchestration is the most specific of the three terms, because it implies that the participating agents can reason, plan, adapt, and operate with some autonomy rather than follow only preset branches.

In enterprise marketing and product language, these terms are often used interchangeably. Technically, they do not mean the same thing.

AI orchestration is system-wide coordination of models, data pipelines, and application components. AI agent orchestration focuses specifically on autonomous agents. Agentic orchestration emphasizes governance, oversight, and long-running processes that combine agents, robots, and people.

If a vendor is coordinating model calls and APIs broadly, that is AI orchestration. If it is coordinating autonomous agents, that is AI agent orchestration. If those agents are goal-focused and adaptive, agentic orchestration is the more precise label.

What Is the Difference Between Multi-Agent Systems and Agentic AI Orchestration

A multi-agent system is the architecture. Agentic AI orchestration is the coordination mechanism applied to that architecture. A multi-agent system tells you that multiple autonomous agents exist and collaborate. Agentic AI orchestration tells you how their work is planned, routed, governed, observed, and completed.

That difference is easy to miss because the two ideas overlap so often in production systems. A multi-agent AI system splits a complex objective into discrete tasks executed by specialized agents, and those agents can collaborate in either structured or decentralized ways. Once you add a deliberate coordinator, supervisor, or task ledger that decomposes goals, maintains context, and directs execution, you are no longer describing only the architecture. You are describing agentic AI orchestration operating on top of it.

A multi-agent system can exist without a central orchestrator. Decentralized orchestration models allow agents to communicate directly and reach consensus without one controlling entity.

Agentic AI orchestration, by contrast, normally implies a deliberate coordination layer, whether that layer is a fixed workflow engine, an LLM (large language model) based coordinator, a supervisor agent, or a hybrid of deterministic and model-driven control. This is why most enterprise architectures pair multi-agent structures with explicit orchestration for auditability, routing, security, and recovery.

Key Components of an Agentic AI Orchestrated System

An agentic AI orchestrated system is not just a group of agents. It is a layered system in which each part has a specific job. The orchestrator manages flow, agents do specialized work, memory keeps context available, tools connect the system to real data and actions, protocols make communication consistent, human checkpoints reduce risk, and observability shows what happened during execution. When one of these layers is weak, the whole system becomes harder to trust, scale, or debug.

The Orchestrator

The orchestrator is the control layer of the system. It receives a goal, decides how to break that goal into smaller tasks, chooses which agent or tool should handle each task, routes work to the right place, and checks whether the workflow is moving toward the expected result.

In a simple system, this control can come from fixed rules. In a more adaptive system, the orchestrator often uses a large language model to reason about the request and make routing decisions at runtime. That is what makes the system feel agentic rather than scripted.

This component matters because most real workflows are not solved in one step. A customer service request may need classification, policy lookup, tool use, and a final response. A research workflow may need planning, evidence gathering, synthesis, and review. The orchestrator is the layer that keeps the process coherent. Without it, agents may work in isolation, repeat effort, or take actions in the wrong order.
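The control loop described above can be sketched in a few lines. This is a minimal illustration that assumes a fixed, rules-based plan rather than an LLM planner; the `Orchestrator` class, the role names, and the lambda agents are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable

# An agent is modeled as a plain function: (task text, shared context) -> result.
Agent = Callable[[str, dict], str]

@dataclass
class Orchestrator:
    agents: dict[str, Agent]                      # hypothetical registry: role -> agent
    log: list[str] = field(default_factory=list)  # ordered record of routing decisions

    def plan(self, goal: str) -> list[tuple[str, str]]:
        # Fixed decomposition for illustration; an adaptive system might ask a model instead.
        return [("classify", goal), ("lookup_policy", goal), ("respond", goal)]

    def run(self, goal: str) -> dict:
        context: dict = {"goal": goal}
        for role, task in self.plan(goal):
            if role not in self.agents:
                raise KeyError(f"no agent registered for role {role!r}")
            context[role] = self.agents[role](task, context)  # route, then store the result
            self.log.append(role)
        return context

agents = {
    "classify": lambda task, ctx: "refund_request",
    "lookup_policy": lambda task, ctx: f"policy for {ctx['classify']}",
    "respond": lambda task, ctx: f"Answer based on {ctx['lookup_policy']}",
}
orchestrator = Orchestrator(agents)
result = orchestrator.run("Customer asks for a refund")
```

Because every step writes into one shared `context`, later agents can build on earlier results, and `orchestrator.log` records the order in which work was routed.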

Specialized Agents

Specialized agents are the workers inside the system. Each one is designed for a narrower job, such as retrieving data, validating documents, calling an API, writing a summary, or checking policy rules. In a well-designed system, each agent has a clear role, a limited scope, and a defined set of tools, making routing easier and behavior more predictable.

The main reason to separate agents is clarity. When one agent tries to do everything, prompts grow larger, context becomes noisy, and failures become harder to explain. When roles are separated, it is easier to test one part of the workflow at a time. It also becomes easier to replace or improve one agent without redesigning the whole system. That is one of the practical reasons multi-agent systems are attractive for enterprise work.

Memory Systems

Memory keeps the system from starting from zero on every step. Short-term memory holds the current session context, such as the conversation so far, recent tool outputs, and intermediate decisions inside the active workflow. It is stored in the agent state and persisted through checkpoints for the duration of an ongoing interaction.

Long-term memory stores information that should remain useful across sessions. This can include user preferences, stable facts, past decisions, or task history that the system may need later. Short-term memory helps the agent stay on track right now, while long-term memory helps it remember what matters later.

This distinction is important because memory is not the same as a knowledge base. A knowledge base is a source of reference information that the system queries when needed. Memory is about continuity. It stores what the system has learned or decided during interaction and helps carry that forward in a controlled way.
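As a rough sketch of the split between the two kinds of memory, the example below keeps session context in a small rolling buffer and promotes one durable fact to a cross-session store. The class names, the `max_items` limit, and the in-memory dict standing in for a database are assumptions made for illustration.

```python
from dataclasses import dataclass, field

@dataclass
class ShortTermMemory:
    """Session-scoped context: discarded when the workflow ends."""
    max_items: int = 5
    items: list[str] = field(default_factory=list)

    def add(self, entry: str) -> None:
        self.items.append(entry)
        # Keep only the most recent entries so context stays small.
        self.items = self.items[-self.max_items:]

@dataclass
class LongTermMemory:
    """Cross-session store keyed by user; a dict stands in for a real database."""
    store: dict[str, dict[str, str]] = field(default_factory=dict)

    def remember(self, user_id: str, key: str, value: str) -> None:
        self.store.setdefault(user_id, {})[key] = value

    def recall(self, user_id: str) -> dict[str, str]:
        return dict(self.store.get(user_id, {}))

long_term = LongTermMemory()

# Session 1: the preference is promoted to long-term memory before the session ends.
session_1 = ShortTermMemory(max_items=2)
for step in ["user asked for invoice", "tool returned PDF", "user prefers email delivery"]:
    session_1.add(step)
long_term.remember("user-42", "delivery", "email")

# Session 2: short-term memory starts empty, long-term memory still holds the preference.
session_2 = ShortTermMemory()
preferences = long_term.recall("user-42")
```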

Tool and API Access Layer

Agents become operational only when they can do more than generate text. The tool and API layer gives them access to search, files, business systems, databases, code execution, and external actions. An API (application programming interface) is the standard way one software system sends requests to another. Without tools and APIs, an agent can describe what should happen, but it cannot reliably make that thing happen.

Built-in tools typically include web search, file search, memory, code interpreter, custom functions, and MCP servers. Agents can also orchestrate interactions across foundation models, software applications, and data sources using action groups for APIs and knowledge bases for retrieval. The value of an agent system comes largely from the systems it can reach and the actions it is allowed to perform.
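One way to picture the tool layer is as a registry that checks what an agent is allowed to call before anything reaches an external system. The sketch below is illustrative only; `ToolRegistry`, the tool names, and the agent name are hypothetical and do not correspond to any specific platform API.

```python
from typing import Callable

class ToolRegistry:
    def __init__(self) -> None:
        self._tools: dict[str, Callable[..., object]] = {}
        self._grants: dict[str, set[str]] = {}   # agent name -> tool names it may call

    def register(self, name: str, fn: Callable[..., object]) -> None:
        self._tools[name] = fn

    def grant(self, agent: str, tool: str) -> None:
        self._grants.setdefault(agent, set()).add(tool)

    def call(self, agent: str, tool: str, **kwargs: object) -> object:
        # Check permission before touching the external system.
        if tool not in self._grants.get(agent, set()):
            raise PermissionError(f"{agent} may not call {tool}")
        return self._tools[tool](**kwargs)

registry = ToolRegistry()
registry.register("get_order", lambda order_id: {"order_id": order_id, "status": "shipped"})
registry.register("delete_order", lambda order_id: f"deleted {order_id}")
registry.grant("support_agent", "get_order")   # read access only

order = registry.call("support_agent", "get_order", order_id="A-17")
try:
    registry.call("support_agent", "delete_order", order_id="A-17")
    blocked = False
except PermissionError:
    blocked = True
```

The support agent can read an order but cannot delete one, which keeps the set of reachable actions explicit rather than implied by the prompt.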

Communication Protocols

As agent systems grow, they need standard ways to communicate with tools, data, and other agents. MCP (Model Context Protocol) is an open standard for connecting AI applications to external systems through secure, two-way connections. MCP is now hosted under the Linux Foundation, which reflects how quickly it has become part of the broader ecosystem.

A2A (Agent to Agent Protocol) focuses on communication between agents rather than tool connectivity. As of April 2026, A2A is recognized as a production-ready open standard with support from more than 150 organizations. A2A and MCP serve different roles: A2A helps agents coordinate with each other, while MCP helps them connect to tools and data sources.

Many production systems also need asynchronous event transport so work can move between services without every component waiting on a direct response. That is why teams often rely on messaging infrastructure such as AMQP (Advanced Message Queuing Protocol) and Kafka for decoupled event flow. These transport layers are often part of the same architecture because they move events, status changes, and tasks between components reliably.
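The decoupling idea can be shown with an in-process queue. This sketch uses Python's `asyncio.Queue` as a stand-in for a broker such as Kafka or an AMQP server; it does not use either system's client API, and the event names are made up for the example.

```python
import asyncio

async def producer(queue: asyncio.Queue, events: list[dict]) -> None:
    for event in events:
        await queue.put(event)      # publish without waiting for a consumer reply
    await queue.put(None)           # sentinel: no more events

async def consumer(queue: asyncio.Queue, handled: list[str]) -> None:
    while True:
        event = await queue.get()
        if event is None:
            break
        handled.append(f"{event['type']}:{event['id']}")

async def main() -> list[str]:
    queue: asyncio.Queue = asyncio.Queue()
    handled: list[str] = []
    events = [{"type": "task.created", "id": 1}, {"type": "task.done", "id": 1}]
    # Producer and consumer run concurrently and only share the queue.
    await asyncio.gather(producer(queue, events), consumer(queue, handled))
    return handled

handled_events = asyncio.run(main())
```

The producer never calls the consumer directly, so either side could be replaced or scaled without changing the other.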

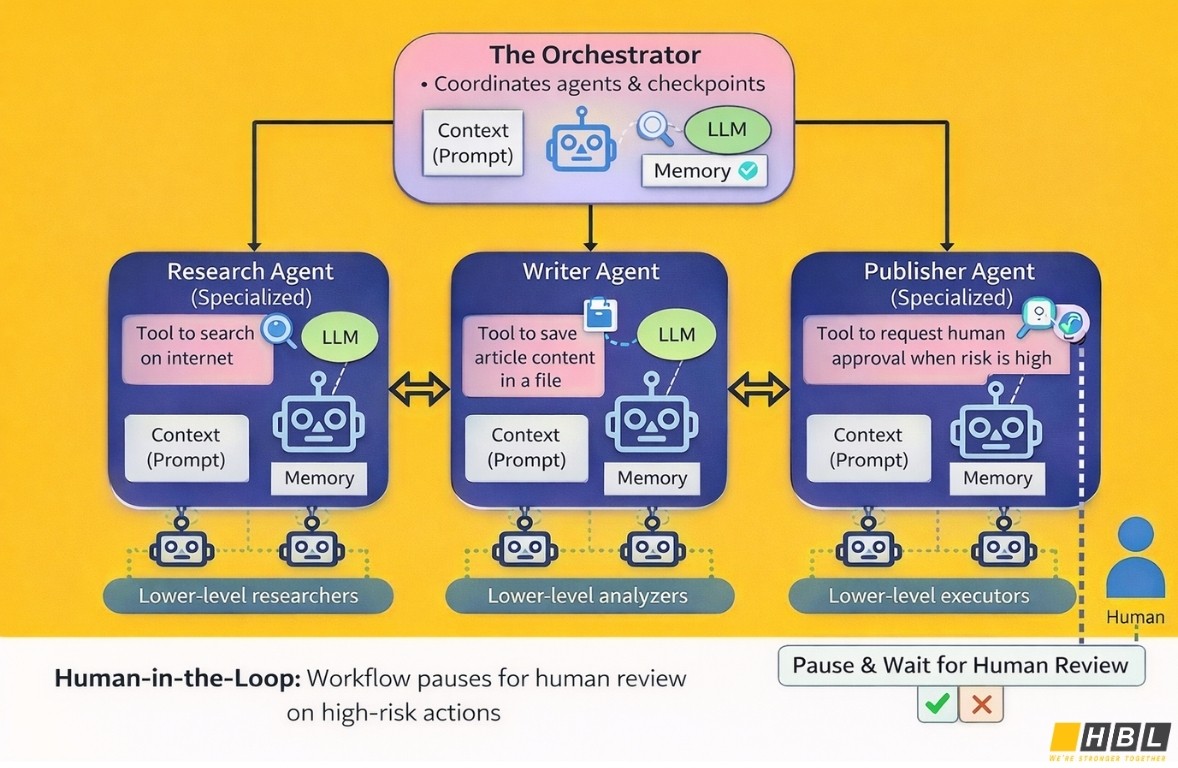

Human-in-the-Loop Checkpoints

Human-in-the-loop checkpoints are places where the workflow pauses and waits for a person to review, approve, reject, or correct an action. These checkpoints are critical when an automated decision could create legal, financial, operational, or reputational risk.

A tool can be marked so that approval is always required before execution. When that happens, the run does not continue to a final answer. It returns a request for human input and waits for the next step.

In practice, these checkpoints are useful for sensitive tool calls, write actions, payments, deletions, policy exceptions, or any decision that should not run without oversight. A human checkpoint is not only a safety layer. It is also a governance mechanism that defines where automation ends and accountable review begins.
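A checkpoint of this kind can be sketched as a gate in front of tool execution. The example assumes a hypothetical set of sensitive tool names and a simple `approved` flag; real platforms expose their own approval mechanisms.

```python
from dataclasses import dataclass

@dataclass
class ApprovalRequest:
    tool: str
    args: dict

# Hypothetical policy: tools listed here always require a human decision.
REQUIRES_APPROVAL = {"issue_refund", "delete_record"}

def execute(tool: str, args: dict) -> str:
    return f"{tool} executed with {args}"   # stand-in for the real side effect

def run_tool(tool: str, args: dict, approved: bool = False):
    # Pause instead of executing when the tool is sensitive and not yet approved.
    if tool in REQUIRES_APPROVAL and not approved:
        return ApprovalRequest(tool, args)
    return execute(tool, args)

first = run_tool("issue_refund", {"amount": 40})
# ...a reviewer inspects `first` and approves it...
second = run_tool(first.tool, first.args, approved=True)
```

The first call returns a request for human input rather than a result, and the run only continues once the reviewer's decision is passed back in.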

Monitoring and Observability Layer

Observability is the layer that lets teams see what the system actually did. In agentic AI orchestration, that means tracing each step of execution, logging events and errors, measuring latency, tracking token usage and cost, and evaluating output quality.

On managed platforms, observability typically includes tracing, metrics, and integration with a monitoring service such as Application Insights across model decisions and tool calls. Full observability provides trace visualizations, dashboards, session counts, latency, duration, token usage, error rates, and OpenTelemetry-compatible telemetry.

This layer becomes essential the moment a workflow has more than one model call or more than one agent. If a final answer is wrong, the team needs to know whether the problem came from retrieval, routing, tool failure, memory, permissions, or the model itself. Without observability, many agent failures look like random bad answers. With observability, they become visible system problems that can actually be fixed.
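To make the idea concrete, here is a small tracing sketch built on the standard library only. It is not OpenTelemetry; the `span` helper, the in-memory `spans` list, and the step names are assumptions chosen to show what a per-step record might contain.

```python
import time
from contextlib import contextmanager

spans: list[dict] = []   # in-memory sink; a real system would export these

@contextmanager
def span(name: str, **attributes):
    record = {"name": name, "status": "ok", **attributes}
    start = time.perf_counter()
    try:
        yield record                      # the step can attach fields such as token counts
    except Exception as exc:
        record["status"] = "error"
        record["error"] = repr(exc)
        raise
    finally:
        record["duration_ms"] = (time.perf_counter() - start) * 1000
        spans.append(record)

with span("retrieve", agent="retriever") as current:
    current["documents"] = 3

try:
    with span("call_tool", agent="billing", tool="get_invoice"):
        raise TimeoutError("billing API timed out")
except TimeoutError:
    pass

failed_steps = [rec["name"] for rec in spans if rec["status"] == "error"]
```

With records like these, a wrong final answer can be traced to the step that failed, here the billing tool call, instead of being treated as a random bad output.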

Core Orchestration Patterns

Agentic AI orchestration usually follows a small number of repeatable coordination patterns. The pattern decides how work is divided, when agents run, how results move between agents, and where control stays fixed or becomes adaptive. It also shapes the tradeoffs around speed, cost, visibility, and reliability. Orchestration is not only about connecting agents. It is about choosing the right control structure for the kind of work the system must do.

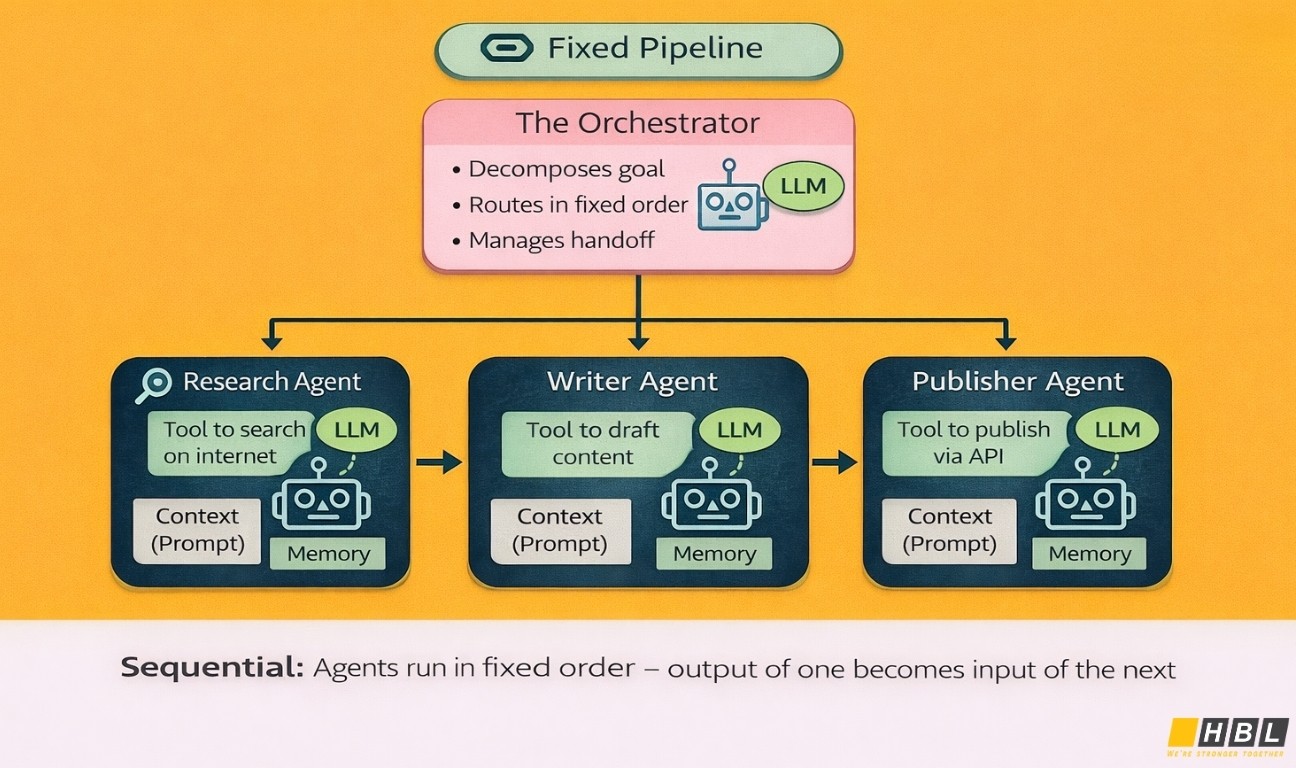

Sequential

A sequential pattern runs agents in a fixed order. One agent finishes its task, passes its output to the next agent, and the workflow continues step by step until the final result is ready. This is the simplest multi-agent structure to understand because it behaves like a pipeline.

This pattern is a strong fit for structured workflows such as document intake, extraction, validation, enrichment, and final formatting. It is also useful for review chains, where one agent drafts, another checks, and another polishes the output. Because the path is fixed, sequential orchestration is easier to test and easier to explain to non-technical stakeholders. It can also reduce cost and latency compared with a more dynamic orchestration model, because the system does not need an extra planner model to decide what happens next.

The weakness of sequential orchestration is rigidity. If the workflow needs to skip steps, branch based on new information, or recover by changing direction, a strict pipeline can become inefficient. When the early output is wrong or weak, the error can continue through the rest of the chain.
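A sequential pipeline can be sketched as a list of functions applied in order. The step names and the toy invoice format below are hypothetical; each function stands in for an agent.

```python
from functools import reduce
from typing import Callable

Step = Callable[[dict], dict]

def intake(doc: dict) -> dict:
    return {**doc, "text": doc["raw"].strip()}

def extract(doc: dict) -> dict:
    # Toy extraction: pull the value after "total:".
    return {**doc, "total": float(doc["text"].split("total:")[1])}

def validate(doc: dict) -> dict:
    return {**doc, "valid": doc["total"] > 0}

def run_pipeline(steps: list[Step], doc: dict) -> dict:
    # Each step receives the previous step's output, in a fixed order.
    return reduce(lambda state, step: step(state), steps, doc)

result = run_pipeline([intake, extract, validate], {"raw": "  invoice total: 120.50 "})
```

The rigidity is visible here too: if `extract` misreads the total, `validate` works from the wrong number, because nothing in the chain can go back a step.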

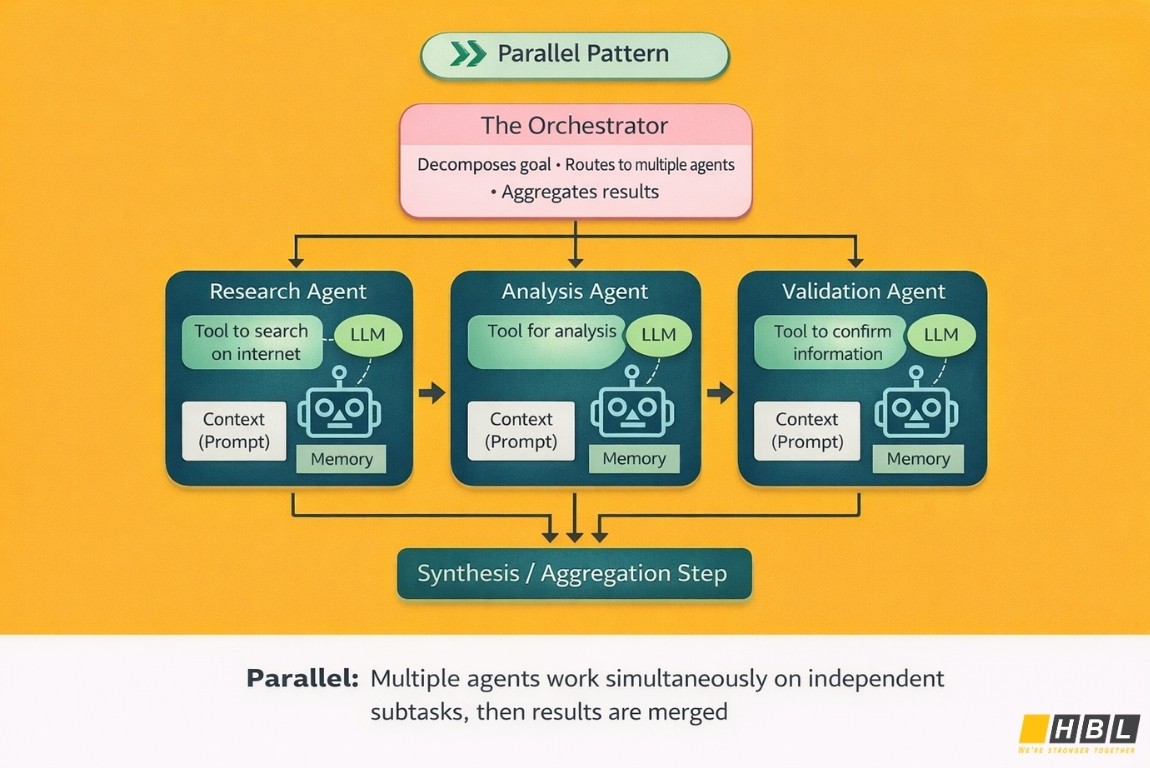

Parallel

A parallel pattern runs multiple agents at the same time. Each agent works on an independent subtask or looks at the same input from a different specialist angle. After that, the outputs are collected, compared, merged, or summarized into one final result.

This pattern is useful when speed matters or when the task benefits from multiple views of the same problem. Good examples include market research, risk assessment, evidence gathering, sentiment analysis, or financial analysis where different agents focus on different dimensions simultaneously. Parallel orchestration can reduce end-to-end response time and increase coverage because different agents can bring different tools, models, or knowledge sources into the same run.

The cost of parallel orchestration is not only financial. It uses more model calls, more tool calls, and more coordination at the end. The system also needs a clear aggregation strategy, whether that means voting, weighted ranking, or a final synthesis step. It is a poor fit when agents must build on each other’s work in a strict order.
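The fan-out and aggregation steps can be sketched with `asyncio.gather`. The three specialist agents, their fixed verdicts, and the majority-vote rule are assumptions made for the example; a real system might use weighted ranking or a synthesis agent instead.

```python
import asyncio
from collections import Counter

async def risk_agent(name: str, verdict: str, delay: float) -> tuple[str, str]:
    await asyncio.sleep(delay)          # stand-in for a model or tool call
    return name, verdict

async def assess(case_id: str) -> dict:
    # All specialists start at once; gather returns results in argument order.
    results = await asyncio.gather(
        risk_agent("credit", "approve", 0.03),
        risk_agent("fraud", "reject", 0.01),
        risk_agent("compliance", "approve", 0.02),
    )
    votes = Counter(verdict for _, verdict in results)
    decision, _ = votes.most_common(1)[0]   # simple majority vote as the aggregation step
    return {"case": case_id, "votes": dict(results), "decision": decision}

outcome = asyncio.run(assess("case-7"))
```

Total wall-clock time is close to the slowest agent rather than the sum of all three, but the run still pays for three calls plus the aggregation logic.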

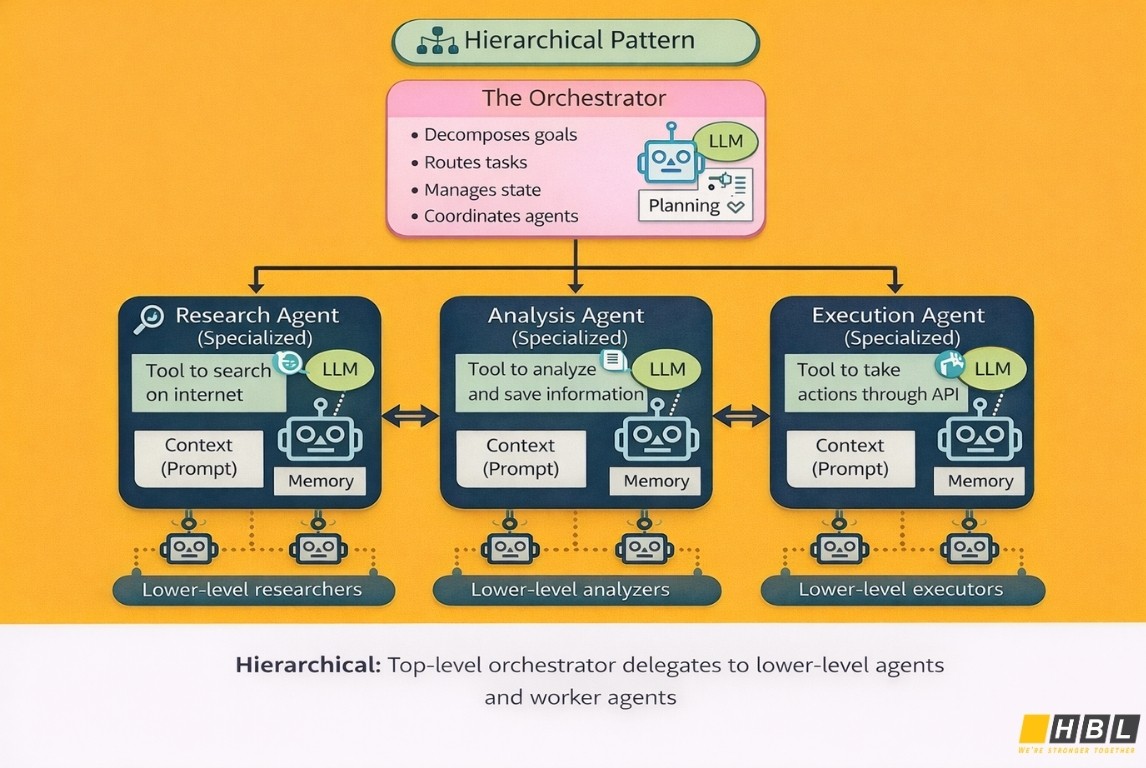

Hierarchical

A hierarchical pattern adds layers of delegation. A top-level orchestrator receives the goal, breaks it into smaller parts, and passes those parts to lower-level agents or sub-orchestrators. Those lower levels can break the work down again until each task is small enough for a worker agent to complete directly.

This structure is useful when the first request is too complex to solve in one pass. Examples include large research tasks, cross-department operations, enterprise planning, or workflows that combine several business functions. In these cases, a single planner can become overloaded. A hierarchical pattern spreads planning across levels, which makes the work more manageable and keeps specialist agents focused on narrower tasks.

The tradeoff is complexity. Hierarchical orchestration usually creates more model calls, more routing decisions, and more places where the system can slow down or fail. This is why hierarchical orchestration should be used when the added planning depth is clearly necessary, not by default.
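Layered delegation can be sketched as a small tree in which inner nodes delegate and leaves do the work. The `Node` class and the agent names are hypothetical, and the way subtasks are named is deliberately simplistic.

```python
from dataclasses import dataclass, field

@dataclass
class Node:
    name: str
    children: list["Node"] = field(default_factory=list)

    def handle(self, task: str, depth: int = 0) -> list[str]:
        if not self.children:
            # Leaf: a worker agent completes the task directly.
            return [f"{'  ' * depth}{self.name} did: {task}"]
        # Inner node: a (sub-)orchestrator delegates one subtask per child.
        lines = [f"{'  ' * depth}{self.name} delegates: {task}"]
        for child in self.children:
            lines += child.handle(f"{task} / {child.name}", depth + 1)
        return lines

tree = Node("planner", [
    Node("research_lead", [Node("web_searcher"), Node("paper_reader")]),
    Node("writer"),
])
trace = tree.handle("market report")
```

Even this toy tree produces five routing entries for three pieces of actual work, which illustrates why each extra layer adds coordination overhead.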

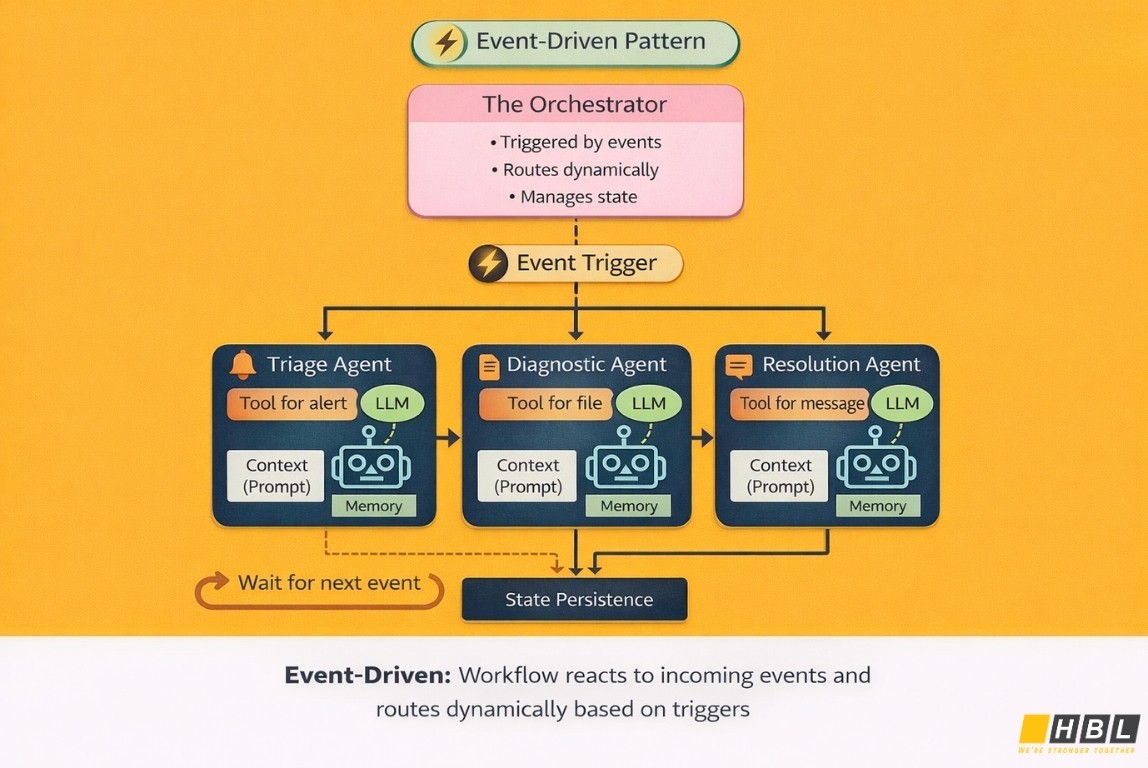

Event-Driven

An event-driven pattern starts work when something happens. That event might be a file upload, a customer message, a transaction, an alert, a sensor signal, or a state change in another system. Instead of following one static script from beginning to end, the workflow reacts to incoming events and routes work based on what has just occurred.

This pattern is a strong fit for real-time or long-running operations. Examples include fraud alerts, incident response, claims handling, customer support queues, telecom fault detection, or document workflows that start when a file arrives and branch again when new status signals appear.

The main benefit is flexibility. Event-driven orchestration can scale well and respond quickly to changing conditions. It also supports durable, long-running processes because the system can pause, wait for the next event, and continue later. The challenge is operational discipline. Teams need good state management, clear trigger definitions, observability, and guardrails so the workflow does not become hard to trace or accidentally trigger the wrong actions.
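The sketch below shows one way to wire triggers, persisted state, and a guardrail together. The event names, the claim workflow, and the in-memory `state` dict are assumptions; a production system would keep state in a durable store.

```python
from collections import defaultdict
from typing import Callable

Handler = Callable[[dict, dict], None]
handlers: dict[str, list[Handler]] = defaultdict(list)
state: dict[str, dict] = {}     # per-claim state; a dict stands in for a database

def on(event_type: str):
    def register(fn: Handler) -> Handler:
        handlers[event_type].append(fn)
        return fn
    return register

@on("file.uploaded")
def start_claim(event: dict, claim: dict) -> None:
    claim["status"] = "awaiting_review"

@on("review.approved")
def pay_claim(event: dict, claim: dict) -> None:
    # Guardrail: only act if the workflow is in the expected state.
    if claim.get("status") == "awaiting_review":
        claim["status"] = "paid"

def dispatch(event: dict) -> None:
    claim = state.setdefault(event["claim_id"], {})
    for handler in handlers.get(event["type"], []):
        handler(event, claim)

dispatch({"type": "review.approved", "claim_id": "c1"})  # arrives out of order; ignored
dispatch({"type": "file.uploaded", "claim_id": "c1"})
dispatch({"type": "review.approved", "claim_id": "c1"})
```

Between events nothing is running; the workflow resumes from stored state when the next signal arrives, and the guardrail keeps an early approval event from triggering a payment.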

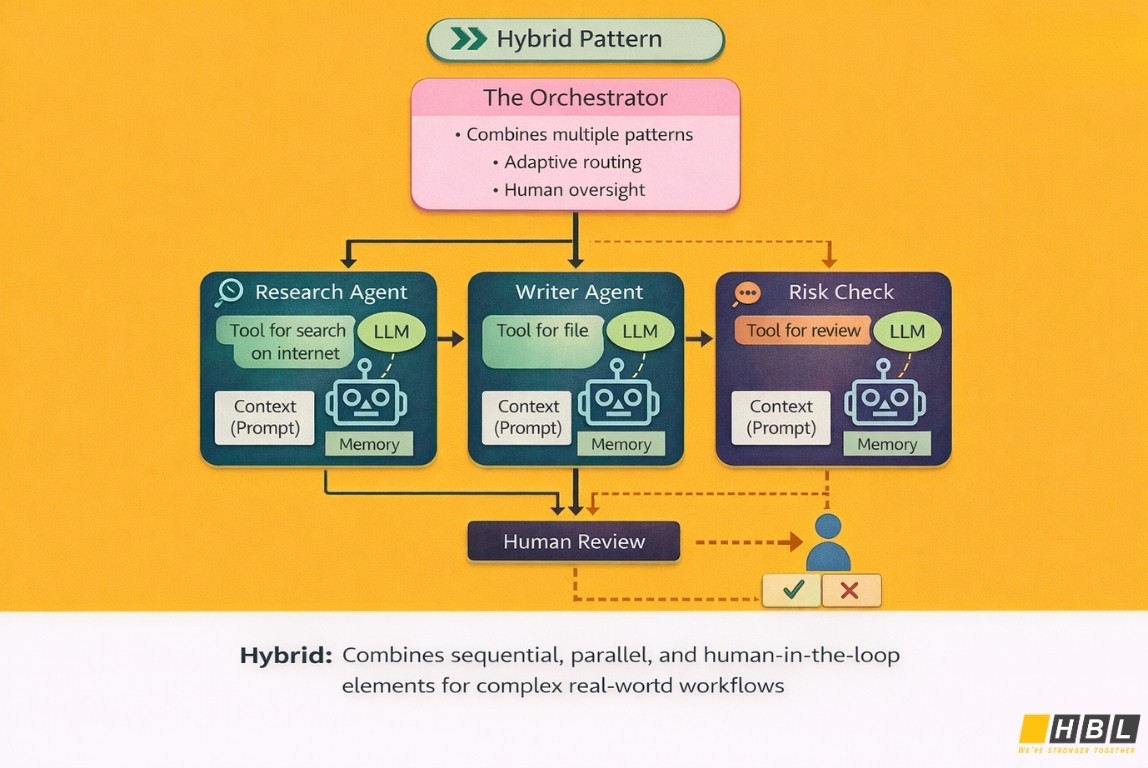

Hybrid

A hybrid pattern combines two or more orchestration patterns in one workflow. This is often the most realistic design for enterprise systems. One part of the workflow may be sequential because the steps are fixed. Another part may branch into parallel checks to reduce latency. A third part may escalate to a supervisor or pause for human review.

Hybrid orchestration becomes useful when a real business process contains different kinds of work. A refund process is a good example. Identity and eligibility checks may run in parallel. A decision step may then choose between different downstream paths. A final response may still need a fixed sequence for packaging and review.

The tradeoff is that hybrid systems are the hardest to design well. Each additional branch, trigger, or coordination mode increases testing and maintenance effort. Hybrid should not mean random complexity. It should mean deliberate combination, where each pattern is used only where it solves a real workflow need.
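The refund example can be sketched as three stages in one function: parallel checks, a decision branch, and a fixed packaging sequence. The 30-day eligibility window, the field names, and the message text are assumptions invented for the illustration.

```python
import asyncio

async def check_identity(req: dict) -> bool:
    await asyncio.sleep(0.01)           # stand-in for an identity service call
    return req["customer_verified"]

async def check_eligibility(req: dict) -> bool:
    await asyncio.sleep(0.01)           # stand-in for a policy lookup
    return req["days_since_purchase"] <= 30   # assumed refund window

def draft(decision: str) -> str:
    return f"Your refund request was {decision}."

def review(message: str) -> str:
    return message + " Reply to this message if you have questions."

async def handle_refund(req: dict) -> dict:
    # Parallel stage: independent checks run together.
    identity_ok, eligible = await asyncio.gather(check_identity(req), check_eligibility(req))
    # Decision stage: choose a downstream path.
    if not identity_ok:
        return {"path": "human_review", "message": None}
    decision = "approved" if eligible else "declined"
    # Sequential stage: fixed packaging order.
    return {"path": decision, "message": review(draft(decision))}

approved = asyncio.run(handle_refund({"customer_verified": True, "days_since_purchase": 12}))
escalated = asyncio.run(handle_refund({"customer_verified": False, "days_since_purchase": 12}))
```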

How to Implement Agentic AI Orchestration

Step 1: Define One Bounded, High-Value Use Case

The first version of an agentic AI orchestration system should solve one workflow, in one team, with one clear business goal. Do not start with a broad cross-department process. A good first use case has a visible pain point, repeated steps, available data, and a result that can be measured, such as faster turnaround time, lower manual effort, or fewer handoff errors.

Not every workflow needs an agentic system. If the work is fully predictable and always follows the same path, a normal rules-based workflow or simple automation may be enough. Agentic AI orchestration becomes more useful when the system needs to reason, choose tools, handle changing inputs, or break a larger goal into smaller tasks.

A practical way to test whether the use case is suitable is to ask four questions. Is the task open-ended enough that a fixed script will struggle? Does it need tool use, such as API calls, database access, or document retrieval? Does it involve more than one step or more than one specialist role? Does it benefit from judgment, adaptation, or routing? If the answer to most of these is no, a simpler architecture is usually better.
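The four questions can be turned into a rough screening helper. In this sketch, "most" is read as at least three of the four answers being no, which is an interpretation rather than a rule from any framework; the `UseCase` fields and the two example workflows are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class UseCase:
    open_ended: bool          # would a fixed script struggle?
    needs_tools: bool         # API calls, database access, document retrieval
    multi_step: bool          # more than one step or specialist role
    needs_judgment: bool      # judgment, adaptation, or routing

def recommend(use_case: UseCase) -> str:
    answers = [use_case.open_ended, use_case.needs_tools,
               use_case.multi_step, use_case.needs_judgment]
    no_count = answers.count(False)
    # Assumption: "most" means at least three of the four answers are no.
    if no_count >= 3:
        return "simpler automation"
    return "candidate for agentic orchestration"

nightly_export = UseCase(open_ended=False, needs_tools=True, multi_step=False, needs_judgment=False)
supplier_onboarding = UseCase(open_ended=True, needs_tools=True, multi_step=True, needs_judgment=True)
```

Here `recommend(nightly_export)` points to simpler automation, while `recommend(supplier_onboarding)` flags a candidate worth mapping in more detail.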

Step 2: Map the Workflow

Before writing code, map the full workflow on paper. Write down where the request starts, what information comes in, what output is expected, which systems the agents must access, where decisions are made, and what can go wrong. For each step, define who does the work, what context they need, what tool they may call, and what signal tells the system to move to the next step.

This workflow map should also include state handoffs, meaning what information one agent must pass to the next so the system does not lose context. It should also include failure paths. What happens if a tool times out, a document is missing, a model gives a weak answer, or a human reviewer rejects the result? When these paths are designed early, the system is much easier to debug later.
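A workflow map can also be captured as data so that missing failure paths are caught before any agent code exists. The structure below is one possible shape, not a standard; the step names, owners, and terminal actions are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Step:
    name: str
    owner: str                # agent or human role that does the work
    tool: Optional[str]       # tool the step may call, if any
    passes_on: list[str]      # state keys handed to the next step
    on_failure: str           # step or terminal action to take when this step fails

WORKFLOW = [
    Step("intake", "intake_agent", None, ["document_id"], "ask_requester"),
    Step("extract", "extraction_agent", "ocr", ["document_id", "fields"], "manual_entry"),
    Step("approve", "finance_reviewer", None, ["decision"], "extract"),
]
TERMINAL_ACTIONS = {"ask_requester", "manual_entry"}

def validate(workflow: list[Step]) -> list[str]:
    """Return problems found in the map; an empty list means every failure path resolves."""
    names = {step.name for step in workflow}
    problems = []
    for step in workflow:
        if step.on_failure not in names | TERMINAL_ACTIONS:
            problems.append(f"{step.name}: unknown failure path {step.on_failure!r}")
    return problems

problems = validate(WORKFLOW)
```

In this map a rejected approval sends the document back to `extract`, and a failure path that points at a step nobody defined would show up in `problems`.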

Step 3: Select the Orchestration Framework

Once the workflow is clear, choose the orchestration pattern. A sequential pattern is better for a fixed pipeline where order matters. A coordinator or supervisor pattern is better when one central agent needs to break a request into subtasks and assign them to specialists. A hierarchical pattern is better when work must be delegated through several layers. A human-in-the-loop pattern is better when the system must pause for approval before continuing.

Then choose the framework or platform that fits that pattern. If the team wants tight control over routing, state, and execution details, a code-first framework is usually the better fit. If the team wants a managed runtime with built-in tools, tracing, evaluation, and security controls, a cloud platform can remove a large amount of setup work.

This choice should follow the workflow’s needs, not just the cloud vendor the company already uses. A narrow, low-risk project can start with a simpler framework. A regulated or high-visibility workflow often benefits from a managed platform that already includes monitoring, identity, and governance features.

Step 4: Build and Test in a Sandbox

The first build should run in a sandbox, not against live production systems. Use test documents, synthetic records, masked data, or low-risk internal examples. At this stage, the goal is not only to see whether the agent produces an answer. The goal is to see how it behaves step by step.

Measure response time, tool use, answer quality, failure recovery, and whether the system stays within the intended workflow. Pre-production evaluation should be treated as a formal part of the lifecycle, not a quick spot check before release.

This is usually the stage where hidden problems appear. One agent may receive too much context and slow down. Another may call the wrong tool. A retrieval step may return poor data. A chain of agent calls may work correctly for normal inputs but fail on edge cases. Testing in a sandbox gives the team a safe place to find these issues before they affect customers or business operations.
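A sandbox run can start as a short script that replays synthetic cases and records more than the final answer. The stub agent, the two test cases, and the scoring rules below are assumptions for illustration; a real harness would call the actual workflow and use richer quality checks.

```python
import time

def agent_under_test(question: str) -> dict:
    # Stand-in for the real workflow; returns the answer plus the tools it called.
    if "refund" in question:
        return {"answer": "refund policy: 30 days", "tools": ["policy_search"]}
    return {"answer": "I am not sure", "tools": []}

# Synthetic cases, including one edge case outside the intended workflow.
CASES = [
    {"input": "What is the refund window?", "expect": "30 days", "allowed_tools": {"policy_search"}},
    {"input": "Delete my account", "expect": "not sure", "allowed_tools": set()},
]

def evaluate(agent, cases: list[dict]) -> list[dict]:
    report = []
    for case in cases:
        start = time.perf_counter()
        out = agent(case["input"])
        report.append({
            "input": case["input"],
            "correct": case["expect"] in out["answer"],
            "stayed_in_scope": set(out["tools"]) <= case["allowed_tools"],
            "latency_ms": (time.perf_counter() - start) * 1000,
        })
    return report

report = evaluate(agent_under_test, CASES)
pass_rate = sum(r["correct"] and r["stayed_in_scope"] for r in report) / len(report)
```

Checking which tools were called, not only whether the answer matched, is what surfaces problems such as an agent reaching for the wrong tool on an edge case.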

Step 5: Instrument Observability Before Production

Observability means being able to see what the system did, why it did it, how long it took, how much it cost, and where it failed. In agentic AI orchestration, this is essential because the system often makes multiple model calls, tool calls, and routing decisions in one run. If observability is added late, debugging becomes slow and expensive.

The team should capture at least five things from day one. Execution traces show the order of steps in a run. Logs show important events and errors. Latency tells you where the workflow is slow. Token and model usage reveal cost drivers. Quality signals show whether answers are grounded, useful, and safe.

Without these signals, a broken workflow often looks like a mysterious bad answer instead of a visible routing, retrieval, or tool problem.

Step 6: Define Human-in-the-Loop Checkpoints

Human review should be designed into the workflow before launch. Do not treat it as a vague fallback. The team needs to decide which actions can run automatically and which actions must pause for human approval. Approval can happen before a tool call, before a sensitive action, or before the final answer is sent.

This matters most in high-stakes workflows. Examples include financial approvals, identity verification, legal review, health-related decisions, policy exceptions, and any action that changes data in an important business system. Human checkpoints are also useful when the task includes subjective judgment, because a model may produce a plausible answer without applying the right business standard. In those cases, the human reviewer is not just checking quality. The reviewer is part of the control design.

Step 7: Deploy, Monitor, and Iterate

The first production release should be treated as a controlled learning phase. Start with a small user group, a limited workflow, or a low-risk operating window. Watch for routing mistakes, poor tool choices, rising latency, repeated failures, and cost spikes.

Compare real production behavior with test behavior, because many agent systems perform well in controlled examples and then drift when faced with messy real inputs. After launch, the work shifts to continuous monitoring, sampled evaluation of live traffic, scheduled evaluation against test sets, and regular rechecks for quality and safety.

Over time, most improvements happen in four areas. Teams refine agent roles so each agent has a narrower job. They tighten memory boundaries so the system keeps what is useful and drops what is noisy. They improve tool permissions so agents can act safely without being over-privileged. They update routing rules and prompts based on actual failure patterns. Agentic AI orchestration should be treated as an operating system for workflows, not as a one-time feature release. The system becomes better through repeated observation, adjustment, and evaluation.

Leading Agentic AI Orchestration Tools and Frameworks

The current market for agentic AI orchestration breaks into three practical groups. Open-source frameworks are built for teams that want to control orchestration logic in code. Cloud-native platforms reduce infrastructure work by bundling runtime, tools, memory, and observability. Enterprise platforms sit above that layer and focus on governance, interoperability, cataloging, and policy control across a larger agent estate.


Open-Source Frameworks

LangGraph

- LangGraph is a low-level orchestration framework and runtime for long-running, stateful agents. It centers on durable execution, streaming, memory-aware execution, and human-in-the-loop control. In simple terms, it is a framework for developers who want to define how an agent system moves from state to state rather than rely on a prebuilt orchestration layer.

- Best fit: LangGraph fits teams that need custom orchestration for workflows with checkpoints, retries, pauses, approvals, or recovery after failures. It is especially suitable when orchestration itself is a core engineering concern.

- Strengths: The main strength is control. Teams can model explicit transitions, persistent state, and long-lived execution paths in a way that is closer to workflow infrastructure than to a chatbot toolkit. That makes LangGraph attractive for complex internal systems, custom enterprise automation, and cases where auditability of execution matters.

- Watchouts: LangGraph is not the easiest entry point for a beginner team. The same low-level control that makes it powerful also means more architecture decisions, more implementation effort, and fewer built-in business-level abstractions than a managed platform.

CrewAI

- CrewAI organizes orchestration around two concepts. Crews are collaborative groups of agents, while Flows are structured, event-driven workflows that manage execution, state, and persistence. The easiest way to understand CrewAI is that it gives more built-in structure than a raw orchestration framework while still letting developers own the system design.

- Best fit: CrewAI fits teams that want multi-agent coordination with clearer scaffolding, but that still want to keep development in code and retain direct ownership of workflow behavior. It is well suited to internal automation projects, prototypes that need to grow, and teams that want shared state plus event-driven control.

- Strengths: The platform’s clearest advantage is conceptual simplicity. Crews handle collaboration. Flows handle deterministic execution and state. That separation is useful for teams new to the space because it reduces some of the design ambiguity that appears in lower-level frameworks.

- Watchouts: CrewAI still expects teams to design and run their own architecture. It does not remove the need to think carefully about routing logic, failure handling, deployment, and governance around production use.

AutoGen and Microsoft Agent Framework

- AutoGen remains an important reference point because it helped define popular multi-agent collaboration patterns. For current planning, Microsoft Agent Framework is the more relevant platform. It combines agent abstractions with graph-based workflows, type-safe routing, session-based state management, middleware, memory, MCP integration, and human-in-the-loop support.

- Best fit: Agent Framework fits teams already aligned to the Microsoft ecosystem that want a modern coding framework for multi-agent workflows. It is especially relevant when developers want explicit workflow control with Microsoft-adjacent tooling and runtime options.

- Strengths: Agent Framework is now the clearer forward path for new Microsoft-aligned orchestration work. It unifies ideas that previously sat across AutoGen and Semantic Kernel and adds workflow control for long-running and human-mediated processes. That gives buyers a more coherent build path than the earlier split ecosystem.

- Watchouts: Agent Framework is still in public preview, so buyers should treat it as forward-looking rather than fully settled infrastructure.

LlamaIndex Workflows

- LlamaIndex Workflows is an event-driven, step-based orchestration layer. Workflows are made of steps that consume events and emit new events, and the framework includes instrumentation for observing execution. It is easier to think of this as a modular event engine for agents and retrieval flows rather than a full enterprise control plane.

- Best fit: It fits developers who want a lighter orchestration model and prefer composing steps and events. It is a natural fit for teams already using LlamaIndex for retrieval, extraction, or agent workflows.

- Strengths: Its main strength is simplicity at the orchestration layer. The event model is clear, the abstractions are developer-friendly, and the framework supports both linear and more complex branching or looping workflows without forcing a large platform commitment.

- Watchouts: LlamaIndex Workflows is lighter than many competing platforms. Teams still need to assemble more of the surrounding production stack themselves, especially around governance, broader platform integration, and enterprise-level controls.

Semantic Kernel

- Semantic Kernel includes orchestration patterns such as concurrent, sequential, handoff, group chat, and Magentic-style coordination inside its agent framework. That makes it relevant for teams already building on the broader Semantic Kernel stack.

- Best fit: It fits organizations already invested in Semantic Kernel concepts and packages, especially when they want orchestration capabilities as part of a larger Microsoft-oriented application stack.

- Strengths: The framework exposes multiple orchestration patterns through a consistent programming model. That is useful for teams that want to experiment with different collaboration modes without switching frameworks.

- Watchouts: The most important caution is maturity. Agent orchestration in this framework is labeled experimental and subject to significant change. For buyers who want the most stable long-term orchestration foundation, that makes it harder to treat Semantic Kernel orchestration as the primary bet.

Cloud-Native Platforms

Amazon Bedrock Multi-Agent Collaboration

Amazon Bedrock uses a supervisor and collaborator model. A supervisor agent handles planning, orchestration, and user interaction, then delegates work to collaborator agents that can operate in parallel. This is a managed hierarchical orchestration model inside AWS.

- Best fit: It fits organizations already committed to AWS that want managed multi-agent coordination without building orchestration logic from scratch. It is especially suitable for teams that prefer a clear supervisor pattern and want faster time to deployment.

- Strengths: The main strength is managed structure. Bedrock makes it straightforward to assemble a specialist team of agents under centralized control, which is useful for complex tasks that benefit from delegation and parallel work.

- Watchouts: The structure is intentionally opinionated. Bedrock currently limits a supervisor to 10 collaborator agents, and agents that use custom orchestration are excluded from this collaboration mode. Buyers should read that as strong convenience in exchange for less architectural freedom than code-first frameworks offer.
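
The supervisor and collaborator model can be illustrated with a small, framework-neutral sketch. This is not the Bedrock API: the `Supervisor` class, the two stub agents, and the `MAX_COLLABORATORS` constant are hypothetical names chosen for illustration, and the planner simply forwards the same request to every collaborator.

```python
import asyncio
from typing import Awaitable, Callable, Dict

# Hypothetical cap that mirrors the 10-collaborator limit noted above.
MAX_COLLABORATORS = 10

Collaborator = Callable[[str], Awaitable[str]]


class Supervisor:
    """Plans subtasks, delegates them in parallel, and merges the results."""

    def __init__(self, collaborators: Dict[str, Collaborator]) -> None:
        if len(collaborators) > MAX_COLLABORATORS:
            raise ValueError(f"at most {MAX_COLLABORATORS} collaborators are allowed")
        self.collaborators = collaborators

    def plan(self, request: str) -> Dict[str, str]:
        # Illustrative planner: every collaborator receives the same request.
        return {name: request for name in self.collaborators}

    async def run(self, request: str) -> Dict[str, str]:
        subtasks = self.plan(request)
        # Collaborators run concurrently; gather keeps results in input order.
        results = await asyncio.gather(
            *(self.collaborators[name](task) for name, task in subtasks.items())
        )
        return dict(zip(subtasks.keys(), results))


async def pricing_agent(task: str) -> str:
    return f"pricing checked for: {task}"


async def inventory_agent(task: str) -> str:
    return f"inventory checked for: {task}"


def demo() -> Dict[str, str]:
    supervisor = Supervisor({"pricing": pricing_agent, "inventory": inventory_agent})
    return asyncio.run(supervisor.run("order 1234"))
```

In a real deployment the planner would decompose the request into different subtasks per specialist, and the managed service would handle the delegation that `asyncio.gather` stands in for here.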

Microsoft Foundry Agent Service

Foundry Agent Service is a fully managed platform for building, deploying, and scaling agents. It supports prompt agents, workflow agents, and hosted agents. It also includes managed tools such as web search, file search, memory, code interpreter, and MCP servers, along with tracing, evaluation, identity, and publishing into Microsoft surfaces.

- Best fit: It fits organizations that want a managed runtime and enterprise distribution layer with room for both low-code and code-based orchestration.

- Strengths: The main strength is breadth. Foundry covers no-code prompt agents, declarative workflow agents with branching and approvals, and hosted code-based agents that can use external frameworks. That makes it one of the more flexible managed environments for mixed enterprise teams.

- Watchouts: Some capabilities remain in preview, including workflow agents and hosted agents. Foundry is most compelling when an organization wants Microsoft identity, security, and distribution surfaces as part of the orchestration decision.

Google Vertex AI Agent Builder

Vertex AI Agent Builder is an open and comprehensive platform for building, scaling, and governing enterprise agents. It combines the Agent Development Kit, Agent Engine runtime, A2A interoperability, session management, memory services, code execution, and managed deployment.

- Best fit: It fits organizations that want managed infrastructure but do not want to give up development flexibility. It is particularly relevant for teams that care about open agent communication standards, heterogeneous framework support, and explicit runtime services for sessions and memory.

- Strengths: Vertex’s clearest advantage is openness plus runtime depth. It supports ADK for multi-agent workflows, promotes A2A as an interoperability layer, and also allows deployment paths for other frameworks. Pricing visibility is also stronger than usual because the platform publishes separate billing for runtime, code execution, sessions, and Memory Bank.

- Watchouts: Vertex is broader than a simple agent builder, which is a strength, but that also makes it more complex to evaluate. Some A2A-related features are still in preview.

Enterprise Platforms and Control Planes

IBM watsonx Orchestrate

IBM watsonx Orchestrate is an enterprise orchestration platform for building, deploying, and scaling AI agents across business workflows. It combines multi-agent orchestration, no-code and pro-code agent building, governance, observability, and integration with business systems and data. The platform includes a catalog of more than 100 domain-specific AI agents and more than 400 prebuilt tools.

- Best fit: It fits enterprises that care less about hand-coding orchestration infrastructure and more about getting governed agents into real business operations quickly.

- Strengths: The strongest value proposition is operational readiness. The platform connects agents, workflows, tools, and systems with security, openness, and control already built in, which is attractive for larger enterprises trying to move from pilots to deployed business automation.

- Watchouts: Teams seeking maximum low-level orchestration freedom may find IBM less attractive than code-first frameworks. The product is aimed more at enterprise execution and governance than at custom orchestration engineering.

Salesforce MuleSoft Agent Fabric

MuleSoft Agent Fabric is a control plane for discovering, orchestrating, governing, and observing agents and MCP servers across ecosystems. Its model centers on a registry for assets, agent brokers for routing and delegation, gateway-level policy controls that support MCP and A2A, and visibility through visualization and monitoring tools.

- Best fit: It fits enterprises dealing with agent sprawl across multiple vendors and runtimes. It is especially useful when the real problem is not building one agent, but managing many agents, many MCP endpoints, and many policy boundaries across different platforms.

- Strengths: The main strength is cross-ecosystem control. Agent Fabric is built for environments where agents already exist across multiple cloud platforms and where discovery, policy, and routing have become a bigger challenge than model access itself.

- Watchouts: MuleSoft Agent Fabric makes the most sense when there is already enough complexity to justify a control plane. Smaller teams or greenfield projects may not need this layer yet, because its value rises as the number of agents, runtimes, and governance requirements grows.
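
The registry, broker, and policy roles described for a control plane can be sketched in a few lines. This is a generic illustration, not MuleSoft's API: `AgentRecord`, `Registry`, `Broker`, the role names, and the capability strings are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Set


@dataclass
class AgentRecord:
    name: str
    capabilities: Set[str]
    handler: Callable[[str], str]


@dataclass
class Registry:
    """Catalog of known agents, searchable by capability."""
    agents: Dict[str, AgentRecord] = field(default_factory=dict)

    def register(self, record: AgentRecord) -> None:
        self.agents[record.name] = record

    def find(self, capability: str) -> List[AgentRecord]:
        return [a for a in self.agents.values() if capability in a.capabilities]


@dataclass
class Broker:
    """Checks policy, picks an agent from the registry, and logs the decision."""
    registry: Registry
    policy: Dict[str, Set[str]]  # caller role -> capabilities it may invoke
    audit_log: List[str] = field(default_factory=list)

    def route(self, caller_role: str, capability: str, payload: str) -> str:
        if capability not in self.policy.get(caller_role, set()):
            self.audit_log.append(f"DENY {caller_role} -> {capability}")
            raise PermissionError(f"{caller_role} may not call {capability}")
        candidates = self.registry.find(capability)
        if not candidates:
            raise LookupError(f"no agent registered for {capability}")
        agent = candidates[0]  # simplest possible selection rule
        self.audit_log.append(f"ALLOW {caller_role} -> {agent.name}")
        return agent.handler(payload)


registry = Registry()
registry.register(AgentRecord("invoice-bot", {"invoice.lookup"}, lambda p: f"invoice {p} found"))
broker = Broker(registry, policy={"finance": {"invoice.lookup"}})
```

Calling `broker.route("finance", "invoice.lookup", "INV-7")` returns the agent's reply, while the same call from a role outside the policy raises `PermissionError` and leaves a `DENY` entry in the audit log.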

ServiceNow AI Control Tower

ServiceNow AI Control Tower is a centralized hub for managing and governing AI models, agents, and workflows across the enterprise. It ties AI initiatives to business services and technology context, automates AI workflows, manages risk, and measures AI impact in real time.

- Best fit: It fits organizations moving from experimentation to enterprise scale, where visibility, governance, lifecycle management, and accountability are becoming more important than low-level orchestration design. It is especially relevant in environments with strong compliance and risk requirements.

- Strengths: Its strength is enterprise oversight. The platform is built to centralize governance and management across native and third-party AI systems, which is useful for leadership, operations, security, and risk teams that need one place to understand what is deployed and how it is performing.

- Watchouts: AI Control Tower should not be read as a pure orchestration framework in the developer sense. It is more about governance, visibility, and control over AI operations than about authoring detailed multi-agent routing logic.

Adobe Experience Platform Agent Orchestrator

Adobe Experience Platform Agent Orchestrator is the agentic layer inside Adobe Experience Platform. It powers specialized Adobe agents behind a conversational interface, retains conversation history, combines results from multiple agents, and supports human oversight. The platform highlights transparent workflows that expose reasoning logic, SQL queries, and conversation history.

- Best fit: It fits Adobe-centered organizations that want agent orchestration inside customer experience, marketing, and data workflows rather than a general-purpose platform for every enterprise domain.

- Strengths: The product’s strongest point is stack alignment. For teams already using Adobe Experience Platform, the orchestration layer sits close to the data, customer context, and expert agents those teams already work with. The transparency features are also notable because they make execution history and system behavior more visible to users.

- Watchouts: The clear boundary is scope. Adobe Experience Platform Agent Orchestrator is not trying to serve as a universal orchestration framework. Its value is highest inside the Adobe ecosystem, and much lower outside it.

Specific Use Cases

Financial Services: KYC and AML Compliance

Agentic AI orchestration is well suited to KYC (know your customer) and AML (anti-money laundering) workflows because the work is document-heavy, policy-constrained, and escalation-prone. A practical design uses a sequential core with hierarchical oversight.

- A document intake agent extracts fields and entities.

- An identity agent validates records.

- Screening agents check sanctions, politically exposed persons lists, and adverse media.

- A risk agent assembles the case and scores it.

- A supervisor keeps task state, enforces ordering, and routes edge cases to a human reviewer.

This kind of collaborative agent system can automate more than 50 percent of traditionally manual tasks, including document intake, sanctions screening, entity resolution, and investigation. The measurable outcomes include greater efficiency, more consistent handling, and faster decisions in negative news screening.
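
The sequential core with supervisory oversight can be sketched as an ordered list of steps that share one case record. Everything here is an assumption made for illustration: the `Case` fields, the stub agents, the `WATCHLIST` entry, the risk scores, and the `REVIEW_THRESHOLD` value do not come from any real compliance system.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass
class Case:
    documents: Dict[str, str]
    fields: Dict[str, str] = field(default_factory=dict)
    identity_verified: bool = False
    screening_hits: List[str] = field(default_factory=list)
    risk_score: float = 0.0
    history: List[str] = field(default_factory=list)
    status: str = "open"


WATCHLIST = {"ACME SHELL LTD"}  # hypothetical sanctions entry
REVIEW_THRESHOLD = 0.5          # hypothetical escalation cutoff


def intake(case: Case) -> None:
    # Extract and normalize the customer name from the submitted documents.
    case.fields["name"] = case.documents.get("name", "").strip().upper()


def identity(case: Case) -> None:
    # Stand-in check: a real agent would validate against external records.
    case.identity_verified = bool(case.fields.get("name"))


def screening(case: Case) -> None:
    if case.fields.get("name") in WATCHLIST:
        case.screening_hits.append("sanctions")


def risk(case: Case) -> None:
    if case.screening_hits:
        case.risk_score = 0.9
    elif not case.identity_verified:
        case.risk_score = 0.6
    else:
        case.risk_score = 0.1


STEPS: List[Tuple[str, Callable[[Case], None]]] = [
    ("intake", intake), ("identity", identity), ("screening", screening), ("risk", risk),
]


def supervise(case: Case) -> Case:
    """Runs steps in a fixed order, records state, and escalates risky cases."""
    for name, step in STEPS:
        step(case)
        case.history.append(name)
    case.status = "human_review" if case.risk_score >= REVIEW_THRESHOLD else "auto_approved"
    return case
```

The supervisor owns ordering and state, so each agent stays small and the `history` list doubles as a simple audit trail for the reviewer.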

Customer Experience: Omnichannel Support Orchestration

Agentic AI orchestration fits omnichannel support when requests arrive through chat, messaging, voice, web forms, or email and require dynamic routing. The underlying pattern is event-driven, often with a structured handoff layer.

- A channel event triggers a triage agent that classifies intent.

- Retrieval agents pull policy and order data.

- Resolution agents handle common tier-one actions such as returns, order status, or account tasks.

If the problem exceeds policy or confidence thresholds, the workflow hands off to a human support agent with the full interaction history intact.

The orchestration layer focuses on routing requests to specialized domains while maintaining context. The measurable outcomes are lower response time, higher self-service resolution, and fewer customers forced to repeat context across channels.
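
A minimal sketch of the triage and handoff flow follows. The keyword classifier stands in for a real intent model, and the intents, confidence values, `CONFIDENCE_THRESHOLD`, and reply strings are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Tuple


@dataclass
class Conversation:
    channel: str
    messages: List[str] = field(default_factory=list)


def triage(message: str) -> Tuple[str, float]:
    """Keyword-based stand-in for an intent classifier: returns (intent, confidence)."""
    text = message.lower()
    if "return" in text or "refund" in text:
        return "returns", 0.9
    if "order status" in text or "where is my order" in text:
        return "order_status", 0.85
    return "unknown", 0.2


# Resolution agents for common tier-one actions.
RESOLVERS: Dict[str, Callable[[str], str]] = {
    "returns": lambda m: "A return label has been created.",
    "order_status": lambda m: "Your order is in transit.",
}

CONFIDENCE_THRESHOLD = 0.7  # hypothetical cutoff for self-service


def handle_event(conv: Conversation, message: str) -> Dict[str, object]:
    conv.messages.append(f"customer: {message}")
    intent, confidence = triage(message)
    resolver = RESOLVERS.get(intent)
    if resolver is None or confidence < CONFIDENCE_THRESHOLD:
        # Hand off to a human with the full interaction history intact.
        return {"handled_by": "human", "intent": intent, "history": list(conv.messages)}
    reply = resolver(message)
    conv.messages.append(f"agent: {reply}")
    return {"handled_by": intent, "reply": reply}
```

Because the conversation object travels with every event, a customer who starts in chat and is escalated later does not have to repeat earlier context.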

DevOps and IT Operations: Automated Incident Response

Agentic AI orchestration supports automated incident response by combining event-driven activation with parallel analysis and controlled remediation.

- An alert or system event triggers the workflow.

- Diagnostic agents inspect logs, metrics, and recent deployment changes.

- Infrastructure agents query system state.

- Rollback or remediation agents prepare safe actions.

- A communication agent updates stakeholders and incident channels.

The business outcome is direct: autonomous investigation begins as soon as an alert fires, which can reduce MTTR (mean time to resolution) from hours to minutes while generating recommendations that help prevent repeat incidents. In higher-risk environments, a human approval gate sits between proposed remediation and execution so the workflow remains auditable and bounded.
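
The combination of parallel diagnosis and a human approval gate can be sketched as follows. The diagnostic functions are stubs, and the `approve` callback, the alert shape, and the rollback proposal text are assumptions for illustration only.

```python
import asyncio
from typing import Callable, Dict, List

Alert = Dict[str, str]


async def inspect_logs(alert: Alert) -> str:
    return f"logs inspected for {alert['service']}"


async def inspect_metrics(alert: Alert) -> str:
    return f"metrics inspected for {alert['service']}"


async def inspect_deploys(alert: Alert) -> str:
    return f"recent deploys inspected for {alert['service']}"


async def respond(alert: Alert, approve: Callable[[str], bool]) -> Dict[str, object]:
    # Diagnostic agents run in parallel as soon as the alert arrives.
    findings: List[str] = list(
        await asyncio.gather(inspect_logs(alert), inspect_metrics(alert), inspect_deploys(alert))
    )
    # The remediation agent only prepares an action; it does not execute it.
    proposal = f"roll back latest deploy of {alert['service']}"
    # Approval gate: nothing is executed unless the reviewer says yes.
    executed = approve(proposal)
    # The communication agent reports whichever outcome occurred.
    update = f"executed: {proposal}" if executed else f"awaiting approval: {proposal}"
    return {"findings": findings, "proposal": proposal, "executed": executed, "update": update}


def handle_alert(alert: Alert, approve: Callable[[str], bool]) -> Dict[str, object]:
    return asyncio.run(respond(alert, approve))
```

Passing `approve=lambda proposal: False` keeps the workflow in a propose-only mode, which is a reasonable default while a team is still building trust in automated remediation.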
