Agentic AI architecture is the system design that lets a generative model work through a task over time instead of responding once and stopping. The model takes in context, interprets a goal, chooses what to do next, uses tools when needed, and carries useful state from one step to another. The focus here is the structure around the model that makes this kind of behavior possible.

Once a system has to manage progress, hold context, and act through several linked steps, architecture moves to the center of the discussion.

The Origins of Agent-Based Thinking

The idea of a software agent did not start with large language models (LLMs). An LLM is a model trained on very large text datasets so it can process and generate language. Long before that, software agents were already understood as systems that could observe an environment, reason about a goal, and take action.

What changed with LLMs was not the basic idea of agency, but the practicality of building it into general software systems. Natural language became usable as an interface for planning, decomposition, routing, and tool selection, which made it possible to design systems that could handle more open-ended work without hardcoding every path from the start.

Research Milestones That Shaped the Field

MRKL Systems, published in 2022, proposed a modular neuro-symbolic design that combines large language models with external knowledge sources and discrete reasoning modules. Neuro-symbolic means combining neural network-based AI with structured reasoning components.

ReAct, also in 2022, made the loop more concrete by showing how a model can reason, act, observe the result, and continue from there.

Toolformer, published in 2023, showed that a model can learn when to call tools, which tools to call, and how to use the returned results in later output. HuggingGPT, also from 2023, turned the controller idea into a more visible system pattern, with one model planning tasks, choosing specialized models, coordinating execution, and bringing results back together.

The Core Design Problem

A standalone generative model can answer questions, summarize documents, and draft text. The design challenge appears when the system must retain information across steps, choose tools dynamically, recover when something fails, split work into subtasks, or operate safely inside real environments.

At that point, a fixed workflow and an agentic system begin to separate. In a workflow, the path is decided in advance. In an agentic system, the model helps direct the path based on what it sees as the task unfolds. Agentic AI architecture is the structure that holds that process together.

What Agentic AI Architecture Actually Means

Agentic AI architecture is the structural design of a system that gives a generative model controlled access to planning logic, memory, tools, execution loops, state transitions, governance layers, and feedback from the environment.

State here means the information the system carries from one step to the next, such as task progress, intermediate results, and prior observations. Governance layers are the controls that limit what the system can do, what it can access, and when it must stop or ask for review.

Why the Definition Matters in Practice

Many teams attach a language model to a prompt, a chat interface, or a simple tool call and describe the result as an agent. Architecturally, that is not enough. A system becomes agentic when it can maintain progress toward a goal across multiple steps, decide when an external tool is needed, update its own state after each action, and continue operating inside clear limits of autonomy.

In other words, agency is a property of the architecture around the model, not of the model alone.

Once you look at the problem this way, the most important design choices are architectural. These include which components exist and how those components connect, what information is passed forward, what is stored and what is discarded, which tool calls are allowed, what happens when a step fails, where the system pauses, and how it resumes.

These decisions determine whether the system can act coherently over time or whether it breaks as soon as the task becomes longer, messier, or more sensitive.

From Generative Model to Agentic System

How a Generative Model Operates by Default

A generative model receives an input, processes that input, and returns an output in one pass. Once that pass is complete, the model does not retain memory of earlier turns unless the earlier exchange is deliberately included again in the next prompt. On its own, it does not loop through a task, branch into alternate paths, or retry after failure.

Its behavior is stateless, single-pass, and limited by its context window, which is the maximum amount of text (measured in tokens) it can process in one interaction.
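
The statelessness described above can be shown with a minimal sketch. `fake_model` is a hypothetical stand-in for a single-pass model call, not a real API. It sees only the prompt string it is handed, so an earlier turn is "remembered" only when the caller includes it again.

```python
def fake_model(prompt: str) -> str:
    """Hypothetical single-pass model: its answer depends only on `prompt`."""
    if "What is my name?" in prompt and "My name is Ada." in prompt:
        return "Your name is Ada."
    if "What is my name?" in prompt:
        return "I don't know your name."
    return "Noted."

# Two separate calls: nothing carries over from the first to the second.
first = fake_model("My name is Ada.")
forgot = fake_model("What is my name?")

# The caller re-sends the earlier turn, so the model can use it.
remembered = fake_model("My name is Ada.\nWhat is my name?")
```

Any continuity in this sketch comes from the caller, which is the gap the surrounding architecture has to fill.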

What Must Be Added to Produce Agentic Behavior

To move from that baseline to an agentic system, the surrounding design has to add capabilities the model does not have by itself.

The first is goal representation, which means the system must hold and track what it is trying to accomplish over time, not just what the latest user message says.

The second is a planning or reasoning mechanism that can break the goal into steps and decide which step should happen next.

The third is conditional tool use, where the model can determine whether it needs a tool, choose the right one, and use the result in the next step.

The fourth is state persistence, which means information from one step is stored and carried into the next step in a controlled way.

A useful starting point for this transition is the augmented LLM, which extends the model with retrieval, tools, and memory before more complex architectural layers are added.

The Execution Loop as an Architectural Pattern

The key mechanism that connects these capabilities is the execution loop. ReAct, short for Reasoning and Acting, made this pattern explicit by structuring the system as a cycle. The model generates a reasoning step, takes an action, observes the result of that action, and then reasons again using the new observation. This continues until the goal is reached or the system hits a stopping condition.

That pattern requires an engineered loop around the model that can capture outputs, run actions, collect observations, store intermediate state, and feed the updated context back into the next model call.
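
A minimal sketch of such a loop follows. It assumes a scripted policy function in place of a real model call, and a single toy tool. `Step`, `RunState`, `scripted_policy`, `TOOLS`, and `run_loop` are illustrative names, not part of ReAct or any library.

```python
from dataclasses import dataclass, field
from typing import Callable

@dataclass
class Step:
    thought: str    # the reasoning text for this step
    action: str     # a tool name, or "finish"
    argument: str   # tool input, or the final answer when action == "finish"

@dataclass
class RunState:
    goal: str
    observations: list[str] = field(default_factory=list)

def scripted_policy(state: RunState) -> Step:
    """Hypothetical stand-in for the model call: picks the next step from state."""
    if not state.observations:
        return Step("I need the two numbers added.", "add", "2,3")
    return Step("I have the result.", "finish", state.observations[-1])

# Toy tool registry (an assumption for this sketch).
TOOLS: dict[str, Callable[[str], str]] = {
    "add": lambda arg: str(sum(int(x) for x in arg.split(","))),
}

def run_loop(goal: str, policy: Callable[[RunState], Step], max_steps: int = 5) -> str:
    state = RunState(goal)
    for _ in range(max_steps):
        step = policy(state)                              # reason
        if step.action == "finish":
            return step.argument
        observation = TOOLS[step.action](step.argument)   # act
        state.observations.append(observation)            # observe and store
    return "stopped: step limit reached"                  # stopping condition

answer = run_loop("What is 2 + 3?", scripted_policy)
```

The `max_steps` limit is the simplest stopping condition. A production loop would typically add error handling around the tool call as well.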

Engineering Implications of the Shift

The architectural shift becomes significant the moment the system needs to remember, branch, retry, or act on external systems. At that point, the team has to decide where state will be stored, how tools are exposed, what happens when an action fails, how many iterations are allowed, how latency grows with each step, how cost accumulates across repeated model calls, and when the system should stop and ask for human input.

Core Layers of Agentic AI Architecture

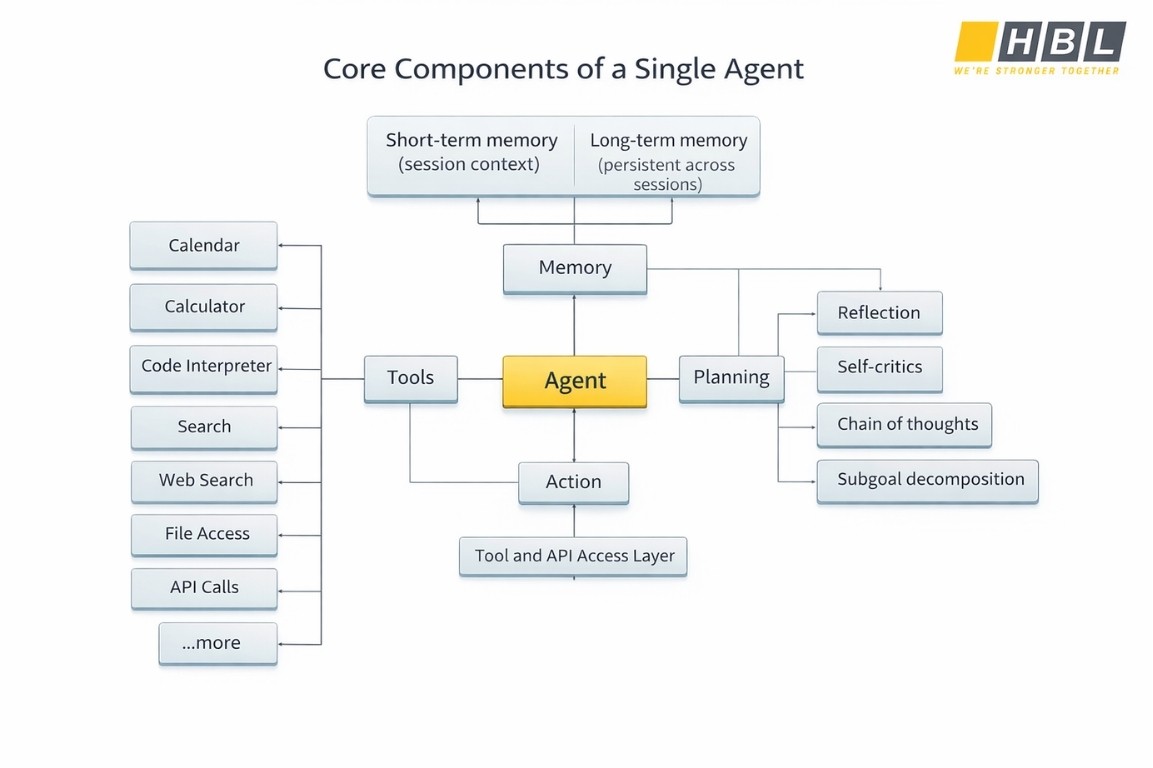

The Reasoning Core

The reasoning core is the part of the system that turns a user request or system goal into a sequence of decisions. It interprets what the task is, identifies what information is missing, decides whether a tool is needed, and determines what should happen next at each step.

In a generative model alone, that process is unstable because the model only sees the prompt in front of it. In agentic AI architecture, the reasoning core is given a more structured operating frame. The system passes in the goal, the relevant state, the available tools, and the rules of execution in a way that lets the model reason with boundaries instead of improvising without control.

Without that structure, reasoning tends to degrade into hallucination, task drift, or unnecessary tool use because nothing anchors the model to the real state of the task.

The Control Layer

The control layer determines what the system does after each intermediate result: whether the next step should stay with the same agent, move to another agent, call a tool, wait for input, or stop.

In a simple single agent design, the model often handles both reasoning and control inside one loop. As tasks grow more complex, that arrangement breaks down. A separate coordinator, planner, or workflow runtime is then introduced to manage decomposition and routing more explicitly.

Common patterns move from a single agent to coordinator-based designs, then to hierarchical task decomposition, and finally to swarm-style collaboration where many specialized agents communicate more freely. Without this layer, multi-step work becomes fragile. Tasks repeat, branches are taken without enough justification, and context is passed forward without a clear rule for what should be preserved or discarded.
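
The decision the control layer makes after each intermediate result can be sketched as an explicit routing function. The flags on `StepResult` and the priority order in `route` are assumptions chosen for illustration. A real system would derive them from model output, tool results, and policy.

```python
from dataclasses import dataclass
from enum import Enum

class NextStep(Enum):
    CONTINUE = "continue"              # stay with the same agent
    HANDOFF = "handoff"                # move to another agent
    CALL_TOOL = "call_tool"
    WAIT_FOR_INPUT = "wait_for_input"
    STOP = "stop"

@dataclass
class StepResult:
    done: bool = False
    needs_tool: bool = False
    needs_user_input: bool = False
    out_of_scope: bool = False         # the current agent cannot handle this

def route(result: StepResult, iteration: int, max_iterations: int = 10) -> NextStep:
    """Pick the next step. Checks run in a fixed priority order."""
    if result.done or iteration >= max_iterations:
        return NextStep.STOP
    if result.needs_user_input:
        return NextStep.WAIT_FOR_INPUT
    if result.out_of_scope:
        return NextStep.HANDOFF
    if result.needs_tool:
        return NextStep.CALL_TOOL
    return NextStep.CONTINUE
```

Keeping this logic outside the model makes the routing rule testable on its own, independent of prompt wording.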

Memory and State

State is the working data of the current run. It includes the current goal, the progress made so far, the outputs of previous steps, and any intermediate values the system still needs. Memory is the mechanism that stores and retrieves information so that the system can keep continuity over time.

Without state, the system cannot act coherently across steps because every action would be treated as if it were the first one. Without memory, the system cannot sustain coherence across turns, sessions, or long-running tasks.

Short-term memory, also called in-context or session memory, holds the information needed inside a single run or conversation. It usually includes message history, tool outputs, intermediate decisions, retrieved documents, and other active context.

Long-term memory, also called persistent memory, holds information across sessions and stores it outside the immediate model call so it can be retrieved later when relevant. What goes into each kind of memory is a decision the system designer makes explicitly. It should not be left to whatever happens to accumulate in the prompt, because unmanaged context growth leads directly to higher cost, slower runs, and degraded reasoning quality.
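
The split between the two kinds of memory can be sketched as two small classes. This is an illustration under simplifying assumptions: short-term memory is bounded by entry count rather than tokens, and long-term memory is an in-process dictionary rather than a database or vector store. The class and method names are hypothetical.

```python
from collections import deque
from typing import Optional

class ShortTermMemory:
    """Session memory: keeps only the most recent entries of the current run."""
    def __init__(self, max_entries: int) -> None:
        self._entries = deque(maxlen=max_entries)  # oldest entries drop off

    def add(self, entry: str) -> None:
        self._entries.append(entry)

    def context(self) -> list[str]:
        return list(self._entries)

class LongTermMemory:
    """Persistent memory: lives outside any single run and is read on demand."""
    def __init__(self) -> None:
        self._facts: dict[str, str] = {}

    def remember(self, key: str, value: str) -> None:
        self._facts[key] = value

    def recall(self, key: str) -> Optional[str]:
        return self._facts.get(key)

session = ShortTermMemory(max_entries=2)
for message in ["user: hi", "tool: order A1 found", "agent: order A1 ships Friday"]:
    session.add(message)

store = LongTermMemory()
store.remember("preferred_language", "English")
```

The bounded `deque` is one simple way to keep context from growing without limit. Summarizing old entries instead of dropping them is a common alternative.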

Tool and Action Interfaces

A tool is any callable external capability the model can use, such as a search API (Application Programming Interface), a database query, a browser action, a file retrieval function, or a code execution environment. Tools are what make an agentic system operational. They let the system fetch evidence, update records, run calculations, inspect files, or trigger business actions.

Toolformer marked an important step by showing that a model can learn not only how to use a tool, but also when to use it, which tool to select, what arguments to send, and how to incorporate the returned result into the next stage of reasoning.

Tool Interface Design Principles

Tool performance depends heavily on interface design. A model relies on the name of the tool, the description of what it does, the structure of its inputs, the clarity of its outputs, and the boundaries between one tool and another. When tools overlap too much, the model routes poorly. The most reliable tool interfaces follow these design principles:

- Clear names that signal purpose without ambiguity

- Non-overlapping scope so each tool has a distinct job

- Explicit input and output schemas so the model knows what to send and what will come back

- Detailed descriptions that explain when the tool should be used and when it should not

- Fallback behavior for empty results, tool failure, or missing parameters
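
The principles above can be sketched as a small tool wrapper. `ToolSpec`, `call_tool`, and the `lookup_order` tool are hypothetical names for this example. The schema check is deliberately simple (required parameter names and Python types) and is not a substitute for a full schema validator.

```python
from dataclasses import dataclass
from typing import Any, Callable

@dataclass
class ToolSpec:
    name: str                          # clear, purpose-signalling name
    description: str                   # when to use it and when not to
    required_params: dict[str, type]   # explicit input schema
    handler: Callable[..., Any]

def call_tool(tool: ToolSpec, arguments: dict[str, Any]) -> dict[str, Any]:
    """Validate inputs, run the tool, and always return a structured result."""
    missing = [p for p in tool.required_params if p not in arguments]
    if missing:
        return {"ok": False, "error": f"missing parameters: {missing}"}
    for param, expected in tool.required_params.items():
        if not isinstance(arguments[param], expected):
            return {"ok": False, "error": f"{param} must be {expected.__name__}"}
    try:
        result = tool.handler(**arguments)
    except Exception as exc:           # fallback for tool failure
        return {"ok": False, "error": f"tool failed: {exc}"}
    if not result:                     # fallback for empty results
        return {"ok": True, "result": result, "note": "empty result"}
    return {"ok": True, "result": result}

def lookup_order(order_id: str) -> list[str]:
    orders = {"A1": ["keyboard", "mouse"]}   # toy data for the sketch
    return orders.get(order_id, [])

lookup_order_tool = ToolSpec(
    name="lookup_order",
    description="Return the items in one order by its ID. "
                "Do not use this to search orders by customer.",
    required_params={"order_id": str},
    handler=lookup_order,
)
```

Returning a structured error instead of raising lets the model see what went wrong and decide whether to retry, fix its arguments, or stop.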

Environment Feedback Loops

The environment feedback loop is one of the clearest differences between agentic AI architecture and a classic generative deployment. In a single-pass use case, the model produces an answer and stops. In an agentic system, the model acts, receives a result from the environment, updates its internal state, and decides what to do next based on that observation.

The environment might be a database, a business application, a code runner, a search service, or any external system that can confirm whether the last action succeeded, failed, or returned incomplete information. Without feedback from the environment, the model has no reliable way to assess progress. Tool results and execution outcomes are the ground truth that agents need to self-correct, iterate, and recover from errors instead of compounding them silently across steps.

Governance, Safety, and Observability

Governance defines what the system is allowed to do, what resources it may access, what actions require approval, and what boundaries it cannot cross. Safety controls inspect inputs, intermediate outputs, tool calls, and final results so that harmful, irrelevant, or out-of-scope behavior is filtered before it spreads through the workflow. Observability means the system emits structured records of what happened during execution, including logs, traces, metrics, latency measurements, token use, cost signals, retries, failures, and quality evaluations attached to each step.

In agentic AI architecture, observability is especially important because the final answer alone rarely reveals where a problem occurred. An error may come from bad retrieval, wrong routing, stale state, tool misuse, or uncontrolled context growth. Without traces and metrics, all of those failures collapse into one vague bad output.
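
A minimal sketch of step-level tracing shows how a vague bad output can be narrowed down to one step. The record fields and the three example steps are assumptions for illustration. A production system would normally emit these records to a tracing or logging backend instead of keeping them in a list.

```python
import time
from dataclasses import dataclass
from typing import Any, Callable, Optional

@dataclass
class StepTrace:
    step: int
    name: str
    ok: bool
    latency_ms: float
    error: Optional[str] = None

def run_traced(steps: list[tuple[str, Callable[[], Any]]]) -> list[StepTrace]:
    """Run named steps in order and record one trace entry per step.

    For simplicity this sketch keeps going after a failure so every step
    gets a record.
    """
    traces: list[StepTrace] = []
    for index, (name, fn) in enumerate(steps, start=1):
        start = time.perf_counter()
        try:
            fn()
            ok, error = True, None
        except Exception as exc:
            ok, error = False, f"{type(exc).__name__}: {exc}"
        latency_ms = (time.perf_counter() - start) * 1000
        traces.append(StepTrace(index, name, ok, latency_ms, error))
    return traces

def first_failure(traces: list[StepTrace]) -> Optional[StepTrace]:
    return next((t for t in traces if not t.ok), None)

def load_state() -> None:
    raise ValueError("cached order status is 3 days old")  # simulated stale state

traces = run_traced([
    ("retrieve_docs", lambda: ["doc-1"]),
    ("load_state", load_state),
    ("draft_answer", lambda: "draft"),
])
failed = first_failure(traces)
```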

Single Agent and Multi Agent Architecture

A single agent architecture is the minimum viable form of agentic AI architecture. One model, one system prompt, one toolset, one memory strategy, and one execution loop form the complete system. That is often the right architectural choice, not a temporary simplification.

Current design guidance recommends starting at the lowest level of complexity that can reliably solve the task because added coordination increases latency, cost, and failure surface. A single agent system already contains the core layers of agentic behavior: a reasoning core, tool access, memory, a feedback loop, and safety controls. When one reasoning loop can manage the task without losing coherence, that structure is easier to test, easier to monitor, and easier to improve.

Structural Limits of Single Agent Design

The shift away from a single agent does not begin when the model becomes weaker. It begins when the structure of the task becomes too large or too mixed for one agent to manage cleanly.

A single agent architecture starts to strain when the task no longer fits inside one context window, when the workflow needs several kinds of expertise at the same time, when one wrong step affects the entire run because there is no separation between specialist functions, or when the result should be checked independently rather than produced and accepted by the same reasoning loop. These are architectural limits, not model limits.

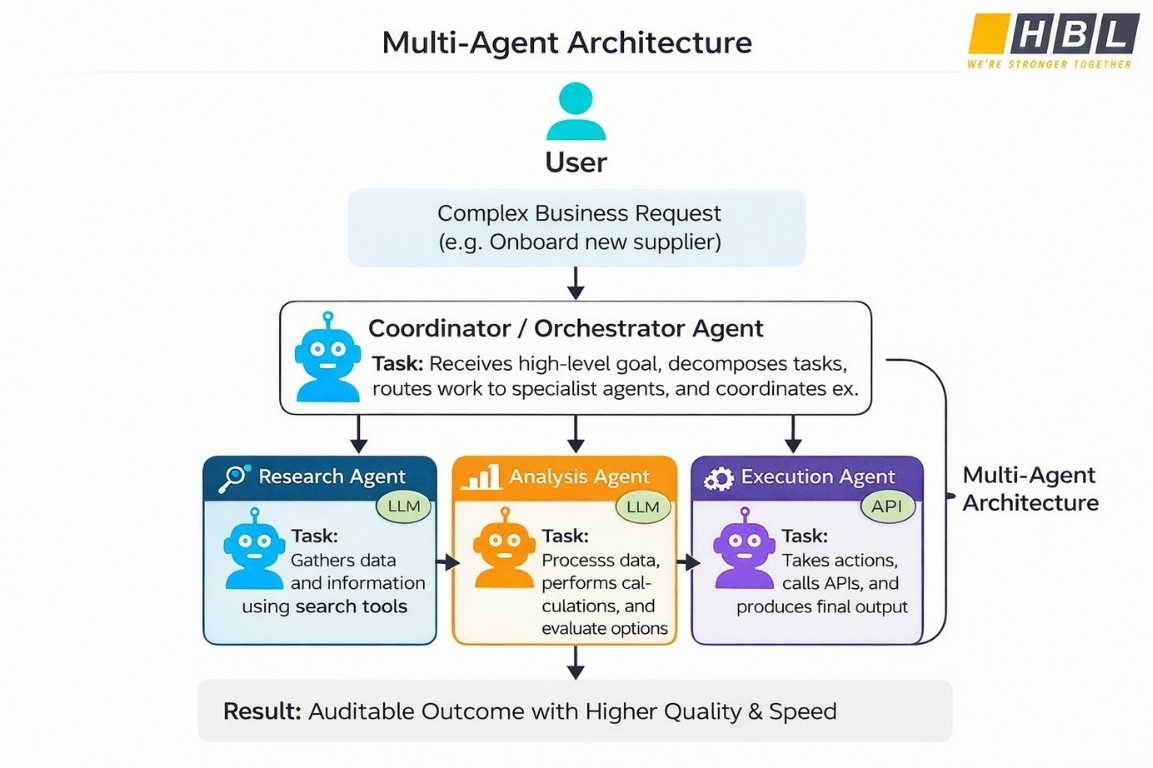

Multi Agent Architecture

Multi-agent architecture is best understood as the scaled-up form of single agent design. The question changes from how one agent reasons to how multiple agents are structured, isolated, and coordinated. The system may use a coordinator pattern in which one agent routes work to specialist subagents, a hierarchical pattern in which tasks are decomposed across several levels, or a swarm pattern in which several agents work in parallel with shared context and explicit stopping conditions.

Teams usually have a strong reason to move to multi-agent architecture when one or more of these conditions are present:

- The task is too complex for one reasoning loop to manage reliably

- The workflow needs distinct specialist roles with different tools or context

- Parallel execution materially improves speed or coverage

- Independent verification improves trust in the final result
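
The coordinator pattern described in this section can be sketched in a few lines. The keyword-based `classify` function is a hypothetical stand-in for model-based routing, and the two specialists are plain functions rather than real agents.

```python
from typing import Callable

Specialist = Callable[[str], str]

def billing_agent(task: str) -> str:
    return f"billing handled: {task}"

def support_agent(task: str) -> str:
    return f"support handled: {task}"

# Each specialist would normally have its own tools and context.
SPECIALISTS: dict[str, Specialist] = {
    "billing": billing_agent,
    "support": support_agent,
}

def classify(task: str) -> str:
    """Hypothetical router: a real coordinator would ask a model to choose."""
    return "billing" if "invoice" in task.lower() else "support"

def coordinator(task: str) -> dict[str, str]:
    role = classify(task)
    result = SPECIALISTS[role](task)   # hand off, then collect the result
    return {"role": role, "result": result}

routed = coordinator("Please resend my invoice")
```

Every call through `coordinator` is a handoff, so this is also where context loss and added latency would show up in a real system.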

The Cost of Coordination

Every extra agent boundary introduces a cost that single agent systems do not carry. Context must be passed from one agent to another, which creates opportunities for information loss, ambiguity, or mismatch between instructions and state. Each handoff adds latency and a new point where the workflow can fail.

There is no universally best architecture for managing an AI agent. The best choice depends on the shape of the task, the level of reliability required, the team’s ability to operate the system, and the amount of ongoing cost and complexity the organization is willing to absorb.

Agentic Frameworks

Agentic frameworks, in the sense used here, are architectural models that define how an agent perceives its environment, processes information, makes decisions, and takes action. In agentic AI architecture, this is one of the earliest and most important choices because it determines the agent’s reasoning model before any implementation layer is selected.

The main framework types are best understood in order of increasing complexity: reactive, deliberative, and cognitive. The BDI framework, short for Belief-Desire-Intention, sits across the deliberative and cognitive range because it gives a more explicit structure for rational decision-making based on internal state.

Reactive Architectures

A reactive architecture is the simplest form of agent design. It maps inputs from the environment directly to outputs without building an internal plan, consulting a world model, or storing a meaningful record of past events. The agent detects a condition, executes a corresponding response, and waits for the next condition.

Because there is no internal reasoning layer shaping future action, reactive systems are fast, direct, and easy to predict. That same structure also limits them. A reactive agent cannot plan a multi-step path toward a later goal or adjust its behavior based on a richer understanding of the world. In agentic AI architecture, reactive designs fit narrow, repeated, high-speed tasks where simplicity and consistency matter more than adaptation.
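
A reactive agent can be sketched as an ordered list of condition-action rules. The temperature thresholds and action names below are made up for illustration.

```python
from typing import Callable

Reading = dict[str, float]

# Ordered rules: the first condition that matches decides the action.
RULES: list[tuple[Callable[[Reading], bool], str]] = [
    (lambda r: r["temp_c"] > 80, "shutdown"),
    (lambda r: r["temp_c"] > 60, "throttle"),
]

def react(reading: Reading) -> str:
    """Map the current input directly to an action. No plan, no memory."""
    for condition, action in RULES:
        if condition(reading):
            return action
    return "noop"
```

The same reading always produces the same action, which is what makes this style fast and predictable and also what prevents it from planning ahead.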

Deliberative Architectures

A deliberative architecture does not act immediately on first perception. Before taking action, it analyzes its environment, consults or constructs an internal model of the world, considers possible future outcomes, and then selects the action that best supports its goal. This is a plan-first, act-second design.

The key difference is that the agent is no longer responding only to the present stimulus. It is reasoning over possible next states and choosing among alternatives. The tradeoff is that reasoning takes time and computational effort. In agentic AI architecture, deliberative systems are slower than reactive ones but far better suited to tasks where the agent must think ahead rather than only react.
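
As an illustration of planning before acting, the sketch below searches a small world model for a path to the goal before any step is executed. The document states in `WORLD` are invented for the example, and breadth-first search is just one simple planning technique among many.

```python
from collections import deque
from typing import Optional

# Internal world model: which states can be reached from which.
WORLD: dict[str, list[str]] = {
    "draft": ["review"],
    "review": ["approved", "draft"],
    "approved": ["published"],
    "published": [],
}

def plan(start: str, goal: str) -> Optional[list[str]]:
    """Breadth-first search for a shortest sequence of states to the goal."""
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        if path[-1] == goal:
            return path
        for nxt in WORLD[path[-1]]:
            if nxt not in seen:
                seen.add(nxt)
                queue.append(path + [nxt])
    return None   # no plan exists in this world model

route_to_publish = plan("draft", "published")
```

The search explores alternatives the agent never acts on, which is the time and compute tradeoff described above.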

Cognitive Architectures

A cognitive architecture extends this idea by modeling a broader range of mental functions through separate modules. These functions include perception, which is the ability to receive and interpret signals from the environment; memory, which includes short-term working memory and long-term stored knowledge; reasoning, which is the process of drawing conclusions and forming judgments; and adaptation, which is the ability to revise behavior based on experience, feedback, or environmental change.

Because these functions are modular, each can be designed, tested, and improved without changing the entire system at once. Cognitive architectures are suitable for complex and uncertain environments where the agent must interpret ambiguous inputs, revise earlier assumptions, and continue operating across different task types. In agentic AI architecture, cognitive designs are the most advanced category because they aim for broad competence under changing conditions.

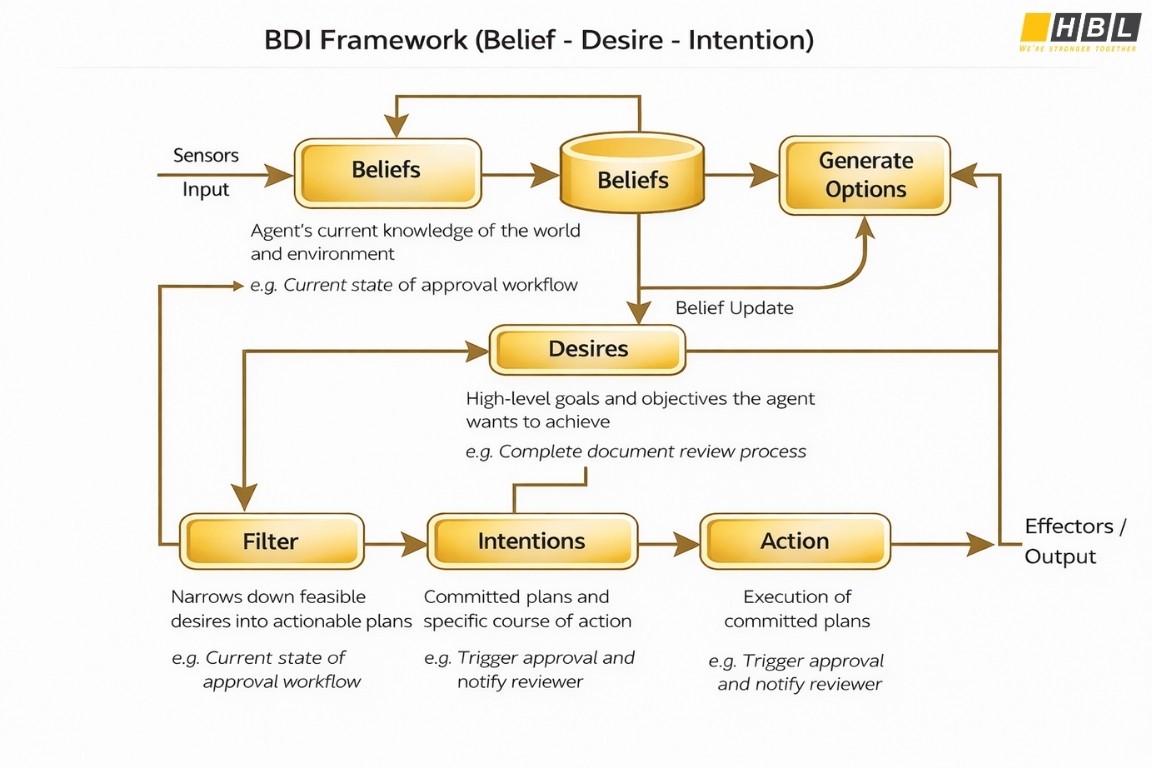

The BDI Framework

The BDI framework gives this internal structure a more explicit decision model. BDI stands for Belief-Desire-Intention, and it is designed to model rational action in an intelligent agent. It belongs with deliberative and cognitive architectures because it combines an internal model of the world with structured goal reasoning and committed plans.

What makes it especially useful is that it separates what the agent knows, what it wants, and what it has decided to do, instead of collapsing all three into one undifferentiated state. The three components are:

- Beliefs are the agent’s current knowledge of the world, including the state of its environment and any sensed or retrieved data. Example: “The approval workflow has not been triggered.”

- Desires are the agent’s goals or objectives at a high level, representing what it is trying to achieve rather than the exact steps it will take. Example: “Complete the document review process.”

- Intentions are the specific course of action the agent has committed to based on its current beliefs and desires. Example: “Trigger the approval workflow and notify the assigned reviewer.”
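
The three components can be sketched as separate fields on one agent object, using the document-review example from the list above. `BDIAgent` and its method names are hypothetical, and the single hand-written rule in `deliberate` stands in for what would be a more general reasoning step.

```python
from dataclasses import dataclass, field

@dataclass
class BDIAgent:
    beliefs: dict[str, bool] = field(default_factory=dict)   # what it knows
    desires: list[str] = field(default_factory=list)         # what it wants
    intentions: list[str] = field(default_factory=list)      # what it commits to

    def update_beliefs(self, observation: dict[str, bool]) -> None:
        self.beliefs.update(observation)

    def deliberate(self) -> list[str]:
        """Derive committed actions from current beliefs and desires."""
        self.intentions = []
        wants_review = "complete_document_review" in self.desires
        triggered = self.beliefs.get("approval_workflow_triggered", False)
        if wants_review and not triggered:
            self.intentions = ["trigger_approval_workflow", "notify_assigned_reviewer"]
        return self.intentions

agent = BDIAgent(
    beliefs={"approval_workflow_triggered": False},
    desires=["complete_document_review"],
)
before = list(agent.deliberate())

# A new observation changes beliefs, so the old intentions no longer apply.
agent.update_beliefs({"approval_workflow_triggered": True})
after = list(agent.deliberate())
```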

Why BDI Matters Architecturally

An agent with desires and intentions but weak belief updating will act on stale or incomplete information. An agent with beliefs and intentions but no coherent goal structure may complete steps efficiently without knowing whether those steps serve the actual objective.

A BDI design forces the system to connect current knowledge, desired outcome, and committed action in a disciplined way. That maps well to agentic AI architecture because real agentic systems must reason over goals, maintain state, and act with bounded autonomy.

These framework types sit above the implementation layer. Software frameworks such as LangGraph, CrewAI, and AutoGen help operationalize these architectural models in code, while managed platforms such as Amazon Bedrock Agents, Azure AI Foundry Agent Service, and Vertex AI Agent Builder provide runtime, memory, and observability support for the framework type a team has chosen.

The architectural framework comes first because it defines how the agent should reason and decide. The software framework and platform are implementation decisions that follow from that choice.

Designing Agentic AI Architecture

Step One: Understand the Shape of the Workload

The first design decision in agentic AI architecture is the shape of the workload.

Four workload shapes are worth distinguishing:

- A deterministic workload follows a fixed sequence of steps with predictable inputs and outputs, so a direct model call or a predefined workflow is often enough.

- An adaptive workload changes path based on what the system observes, so the architecture needs conditional routing and state updates between steps.

- An open-ended workload begins with a goal that is underspecified, which means the system must decompose the goal into tasks without a fully predetermined path.

- An event-driven workload begins with an external trigger such as a message, alert, upload, or transaction and must respond within defined timing or resource limits.

The structure of the work determines whether the system needs a single reasoning loop, a dynamic coordinator, or a more reactive event-based design.

Step Two: Choose the Minimum Viable Level of Complexity

Start with the simplest architecture that can solve the task reliably, then add coordination only when the task actually requires it. A direct generative call is enough for many single-step tasks. A fixed workflow is enough for many multi-step tasks where the path is known in advance.

Agentic AI architecture becomes justified when lower-complexity designs stop being reliable. The clearest signs that a more agentic design is warranted are:

- The system must maintain progress toward a goal across multiple steps rather than answer once and stop

- The system must use external tools or data sources conditionally instead of following a fixed scripted call path

- The system must make adaptive decisions based on intermediate results or environmental feedback

- The system must execute a task through loops, retries, or branching steps that cannot be fully specified in advance

Step Three: Define System Boundaries

Boundary design is one of the most important parts of agentic AI architecture because it determines what the system can reach, what it can remember, when it must stop, and which component is responsible for each class of decision.

Tool boundaries define which tools an agent can access and how clearly each tool’s role is separated from the others. Poorly named or overlapping tools increase routing errors. Memory boundaries define what persists, where it is stored, and which agents or services are allowed to read or update it. Approval boundaries define where the execution loop must pause for human review, such as before a sensitive action or after a high-risk result. In multi-agent systems, responsibility boundaries define which agent owns which decisions, which helps prevent duplicated reasoning, context bloat, and ambiguous handoffs.
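
Tool and approval boundaries can be sketched as an explicit check that runs before every tool call. `AgentBoundary`, `authorize`, and the tool names are illustrative assumptions, not a reference to any platform's policy API.

```python
from dataclasses import dataclass, field

@dataclass
class AgentBoundary:
    allowed_tools: set[str]                                     # tool boundary
    approval_required: set[str] = field(default_factory=set)   # approval boundary

def authorize(boundary: AgentBoundary, tool: str, approved_by_human: bool = False) -> str:
    """Return 'allow', 'pause_for_approval', or 'deny' for a requested tool call."""
    if tool not in boundary.allowed_tools:
        return "deny"
    if tool in boundary.approval_required and not approved_by_human:
        return "pause_for_approval"
    return "allow"

refund_agent = AgentBoundary(
    allowed_tools={"search_docs", "send_refund"},
    approval_required={"send_refund"},
)
```

Because the check lives outside the model, a prompt that talks the model into requesting a forbidden tool still ends in `deny`.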

Step Four: Define Operational Design

Architecture on paper becomes real architecture only when operational design is defined at the same level of detail as reasoning and control.

State persistence strategy determines where state is stored, how long it lives, and how the system restores it after interruption or failure. Retry and recovery behavior determines what happens when a tool call fails or a model output falls outside acceptable parameters. Stop conditions define when the system should halt, whether because the task is complete, a quality threshold is not met, a maximum iteration limit has been reached, or a human decision is needed. Evaluation design determines how the team measures correctness, quality, groundedness, safety, and task completion. Observability supplies the traces, logs, metrics, and telemetry that let engineers inspect each step of a run in production.
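
Retry behavior and stop conditions can be sketched as two small functions. The attempt limit, the stop-reason labels, and the `make_flaky` helper that simulates a failing tool are all assumptions for this example. A real system would usually add backoff between attempts and distinguish retryable from non-retryable errors.

```python
from typing import Any, Callable, Optional

def call_with_retry(action: Callable[[], Any], max_attempts: int = 3) -> dict[str, Any]:
    """Try an action up to max_attempts times and report how it went."""
    last_error = ""
    for attempt in range(1, max_attempts + 1):
        try:
            return {"ok": True, "value": action(), "attempts": attempt}
        except Exception as exc:
            last_error = str(exc)
    return {"ok": False, "error": last_error, "attempts": max_attempts}

def stop_reason(task_complete: bool, needs_human: bool,
                iteration: int, max_iterations: int) -> Optional[str]:
    """Return why the run should halt, or None if it should continue."""
    if task_complete:
        return "complete"
    if needs_human:
        return "human_review"
    if iteration >= max_iterations:
        return "iteration_limit"
    return None

def make_flaky(failures_before_success: int) -> Callable[[], str]:
    """Simulated tool that fails a fixed number of times, then succeeds."""
    remaining = {"count": failures_before_success}
    def action() -> str:
        if remaining["count"] > 0:
            remaining["count"] -= 1
            raise TimeoutError("tool timed out")
        return "ok"
    return action

recovered = call_with_retry(make_flaky(2))
gave_up = call_with_retry(make_flaky(5))
```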

Metrics such as latency, token consumption, error rates, quality scores, tool use, retries, and agent decisions are part of the operating model, not optional debugging extras.

Step Five: Treat Cost and Human Oversight as Architectural Inputs

Cost and human oversight must be treated as architectural inputs from the beginning, not as concerns left for deployment. Every execution loop carries a cost in model calls, tool invocations, memory reads and writes, and latency per step. In multi-agent systems, those costs grow because coordination adds more reasoning steps, more context transfer, and more failure points.
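
A rough sketch of how loop cost accumulates: if each step re-sends the base context plus everything added by earlier steps, input tokens grow faster than the step count. The token figures are invented for illustration, and the linear growth per step is a simplifying assumption.

```python
def total_input_tokens(steps: int, base_context: int, growth_per_step: int) -> int:
    """Sum the input tokens over a run where context grows by a fixed amount per step."""
    return sum(base_context + i * growth_per_step for i in range(steps))

single_call = total_input_tokens(steps=1, base_context=1000, growth_per_step=500)
five_steps = total_input_tokens(steps=5, base_context=1000, growth_per_step=500)
ratio = five_steps / single_call
```

Under these assumptions a five-step run consumes ten times the input tokens of a single call, not five times, which is why iteration limits and context trimming belong in the design from the start.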

Human oversight works the same way. A system can be designed to act autonomously within a defined boundary, or it can be designed to pause at specific checkpoints and escalate for review when risk, uncertainty, or policy demands it. Those review points must be placed explicitly in the architecture because they affect routing, latency, state handling, and control flow long before the system reaches production.