Leading organizations achieve 1.6x higher success rates by treating AI governance as a continuous learning capability rather than static compliance.

Artificial intelligence has moved beyond experimental deployment into mission-critical business operations. Yet most organizations approach AI governance as a static compliance exercise, a checkbox to satisfy regulatory requirements rather than a strategic capability that enables faster, safer AI adoption at scale.

This fundamental misunderstanding creates what MIT Sloan Management Review and BCG researchers identify as a critical divide between Limited Learners and Augmented Learners.

Research demonstrates that organizations treating AI governance as a continuous organizational learning capability achieve 1.6 times greater success rates in AI adoption while simultaneously reducing risk exposure.

Traditional AI governance follows a predictable pattern: establish policies, document procedures, conduct initial assessments, and file reports. Months later, when models drift, edge cases emerge, or regulations evolve, organizations discover their governance framework has become outdated documentation.

Contextual governance transforms this paradigm by embedding learning mechanisms directly into governance processes through continuous feedback loops.

AI Governance as a Dynamic Learning Capability

The shift from compliance-focused to learning-oriented AI governance represents a fundamental reimagining of how organizations approach AI risk management. Limited Learners treat governance as a one-time implementation, establishing policies and procedures that remain largely static until external pressures force updates.

Augmented Learners, by contrast, design governance systems that continuously learn from deployment experiences, regulatory developments, and emerging risks. Organizations that integrate human institutional knowledge with AI-driven insights to manage technological and regulatory uncertainty demonstrate 1.6 times higher success rates in AI implementation.

This performance gap stems from how organizations structure their governance learning capability. Leading firms embed learning mechanisms into every governance touchpoint, transforming isolated compliance checks into interconnected feedback systems. They draw on insights from cross-functional teams, regulatory developments, and real-world AI performance data to refine policies dynamically.

Creating Sustainable Competitive Advantage

Consider how feedback loops between AI deployment and governance policy create sustainable competitive advantage. A financial services firm deploying credit scoring models encounters an edge case where the model produces unexpected results for a specific demographic segment.

In a traditional governance model, this triggers a compliance review, model adjustment, and documentation update. In a learning-based governance system, the incident generates multiple knowledge artifacts:

- Updated risk profiles for similar use cases

- Refined fairness metrics that detect comparable patterns earlier

- Training materials that help teams recognize analogous situations

- Policy adjustments that prevent related issues across the entire AI portfolio

From Cost Center to Competitive Differentiator

The transformation of AI governance from cost center to competitive differentiator requires shifting the organizational mindset from risk avoidance to intelligent risk management. Organizations that have made this shift share several practices. They:

- Maintain comprehensive AI system inventories that capture business context and risk profiles, not just technical specifications

- Implement real-time monitoring that detects performance drift and emerging issues before they escalate

- Establish cross-functional governance committees that bring together technical, legal, ethical, and business perspectives

- Create feedback mechanisms that systematically capture lessons learned and translate them into improved practices

AI Fluency: The Foundation of Contextual Learning

Organizational AI governance capability depends fundamentally on collective AI fluency: the shared understanding across teams of how AI systems function, their inherent limitations, and the risks they introduce. This extends far beyond technical teams.

Marketing professionals must understand how generative AI tools might introduce biases into customer communications.

Human resources staff need to recognize when AI-assisted screening tools require human oversight.

Finance teams should comprehend how AI models generate forecasts and what assumptions underlie those predictions.

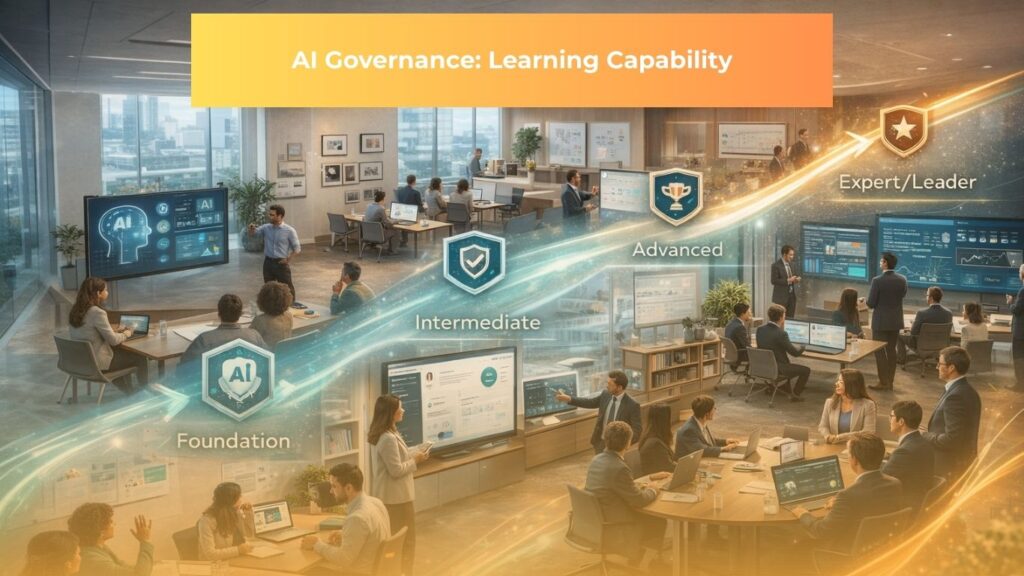

Building a robust governance learning capability starts with foundational AI literacy across all business functions. Organizations must account for role-specific knowledge requirements, departmental risk exposures, and industry-specific compliance obligations.

Role-Specific Competency Development

Research on AI literacy demonstrates that effective programs move beyond generic awareness training to role-specific competency development. A customer service representative needs different AI knowledge than a data scientist.

Organizations that tailor AI education to specific job functions see higher adoption rates and fewer governance violations because training aligns with actual decision-making contexts employees encounter.

AI Ethics Councils: Cross-Functional Collaboration

AI Ethics Councils exemplify how organizations operationalize governance through cross-functional collaboration. These bodies serve as forums where technical expertise meets business acumen, legal knowledge, and ethical reasoning.

High-performing councils establish clear decision rights, defining which AI initiatives require full committee review versus delegated approval. They create escalation paths that balance thorough oversight with deployment velocity.

Measuring AI Fluency Maturity

Metrics for assessing organizational AI fluency maturity provide visibility into whether education programs translate to governance effectiveness. Leading indicators include:

- Rate at which teams proactively engage governance processes rather than circumventing them through shadow AI

- Quality of risk assessments submitted for new AI initiatives

- Frequency of governance-related questions or consultations

Lagging indicators measure actual outcomes:

- Incidence of governance violations

- Time required to resolve ethical concerns

- Success rate of AI deployments in production
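As a rough illustration, several of the indicators above can be computed from simple counts. This is a minimal sketch: the field names, the choice of three metrics, and the sample numbers are all hypothetical and not drawn from any survey or tool.

```python
from dataclasses import dataclass


@dataclass
class PeriodCounts:
    """Hypothetical raw counts collected over one reporting period."""
    initiatives_registered: int   # AI initiatives that went through governance
    shadow_ai_discovered: int     # AI uses found operating outside governance
    deployments_attempted: int
    deployments_successful: int
    violations: int


def _ratio(numerator: int, denominator: int) -> float:
    """Divide safely, returning 0.0 when there is nothing to measure."""
    return numerator / denominator if denominator else 0.0


def engagement_rate(c: PeriodCounts) -> float:
    """Leading indicator: share of known AI initiatives that engaged governance."""
    return _ratio(c.initiatives_registered,
                  c.initiatives_registered + c.shadow_ai_discovered)


def deployment_success_rate(c: PeriodCounts) -> float:
    """Lagging indicator: share of attempted deployments that succeeded."""
    return _ratio(c.deployments_successful, c.deployments_attempted)


def violations_per_deployment(c: PeriodCounts) -> float:
    """Lagging indicator: governance violations per attempted deployment."""
    return _ratio(c.violations, c.deployments_attempted)


# Illustrative numbers only.
sample = PeriodCounts(18, 2, 10, 8, 1)
print(engagement_rate(sample), deployment_success_rate(sample))
```

Tracking the same ratios period over period matters more than any single value, since the trend shows whether education programs are changing behavior.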

Implementing Contextual Governance

Context-aware reasoning forms the technical foundation of adaptive AI governance, enabling governance systems to provide situation-specific guidance rather than applying generic rules uniformly across all AI deployments.

Traditional governance approaches categorize AI systems into broad risk tiers. Contextual governance goes deeper, recognizing that identical AI technologies deployed in different business contexts present vastly different risk profiles. A recommendation algorithm suggesting products on an e-commerce platform carries different ethical implications than the same algorithm prioritizing loan applications or medical treatment options.

Effective contextual governance recognizes these nuances. Rather than applying universal rules, mature organizations tailor oversight intensity, approval workflows, and monitoring frequencies to specific business contexts.

Multi-Dimensional Risk Assessment

The architecture of contextual governance systems leverages metadata, domain logic, and historical precedents to deliver role-aware, situation-sensitive oversight. When a product team proposes deploying a natural language processing model to automate customer service responses, the governance system queries multiple dimensions:

- What data will train the model and how sensitive is that data?

- Who are the end users and what decisions will the AI influence?

- Are there regulatory requirements specific to this use case?

- What similar deployments has the organization attempted previously, and what lessons did they yield?
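The four questions above can be sketched as a simple additive score. The scales, weights, and tier cut-offs below are illustrative assumptions, not values from any framework. A real organization would calibrate them against its own incident history.

```python
from dataclasses import dataclass


@dataclass
class UseCaseContext:
    """Hypothetical answers to the four assessment questions."""
    data_sensitivity: int   # 0 (public data) .. 3 (highly sensitive personal data)
    decision_impact: int    # 0 (informational) .. 3 (affects credit, health, or rights)
    regulated: bool         # a use-case-specific regulation applies
    prior_incidents: int    # incidents recorded for similar past deployments


def risk_score(ctx: UseCaseContext) -> int:
    """Combine the dimensions into a 0..10 score (assumed weights)."""
    score = ctx.data_sensitivity + ctx.decision_impact
    if ctx.regulated:
        score += 2
    score += min(ctx.prior_incidents, 2)  # cap so history cannot dominate
    return score


def risk_tier(score: int) -> str:
    """Map a score to a review tier (assumed cut-offs)."""
    if score >= 7:
        return "high"
    if score >= 4:
        return "medium"
    return "low"


support_bot = UseCaseContext(data_sensitivity=2, decision_impact=1,
                             regulated=False, prior_incidents=1)
loan_ranking = UseCaseContext(data_sensitivity=3, decision_impact=3,
                              regulated=True, prior_incidents=0)

print(risk_tier(risk_score(support_bot)))   # medium
print(risk_tier(risk_score(loan_ranking)))  # high
```

The point of the sketch is that the same model type lands in different tiers depending on context, which is what distinguishes contextual governance from a single technology-based risk tier.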

Technical Architecture Components

Technical implementation of context-aware governance typically involves several integrated components:

- A centralized AI registry catalogs all AI systems with rich metadata describing their purpose, data sources, affected stakeholders, decision authority, and risk characteristics

- Risk assessment engines evaluate new AI initiatives against contextual information, automatically flagging potential concerns based on learned patterns

- Policy management systems store governance rules alongside the business contexts where they apply

- Monitoring dashboards track AI system performance across multiple dimensions, alerting governance teams when context changes affect risk profiles
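To make the registry and policy components concrete, here is a minimal sketch of how a registry entry and context-bound policies might fit together. The class names, fields, context tags, and sample policies are hypothetical. Production systems would typically back this with a database and an approval workflow.

```python
from dataclasses import dataclass, field


@dataclass
class RegistryEntry:
    """One AI system in a hypothetical central registry."""
    name: str
    purpose: str
    data_sources: list[str]
    affected_stakeholders: list[str]
    decision_authority: str                   # e.g. "advisory" or "automated"
    context_tags: set[str] = field(default_factory=set)


@dataclass
class Policy:
    """A governance rule stored with the business contexts it applies to."""
    rule: str
    applies_to: set[str]


def applicable_policies(entry: RegistryEntry, policies: list[Policy]) -> list[str]:
    """Return every rule whose contexts overlap the system's context tags."""
    return [p.rule for p in policies if p.applies_to & entry.context_tags]


policies = [
    Policy("Quarterly fairness audit", {"lending", "hiring"}),
    Policy("Data protection impact assessment", {"personal_data"}),
    Policy("Clinical safety review", {"healthcare"}),
]

credit_model = RegistryEntry(
    name="credit-scoring-v2",
    purpose="Rank loan applications",
    data_sources=["application forms", "repayment history"],
    affected_stakeholders=["loan applicants"],
    decision_authority="advisory",
    context_tags={"lending", "personal_data"},
)

print(applicable_policies(credit_model, policies))
```

Storing policies against context tags rather than against individual systems means a new system inherits the right rules as soon as it is registered with accurate metadata.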

Leveraging Feedback Loops for Continuous Improvement

Structured feedback mechanisms transform AI governance from a static compliance exercise into a continuously improving system that learns from every deployment, incident, and assessment. The concept of reflection, learning, and renewal, borrowed from organizational development theory, applies directly to AI governance.

Organizations that build this capability capture insights from every AI deployment, incident investigation, and audit finding.

Organizations implement several complementary feedback loops operating at different timescales:

- Real-time monitoring detects immediate performance issues, triggering rapid response protocols

- Weekly or monthly governance reviews assess trends across the AI portfolio, identifying systemic patterns that require policy adjustments

- Quarterly or annual comprehensive audits evaluate whether governance frameworks remain aligned with business strategy, regulatory environment, and technological capabilities
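The fastest of these loops, real-time monitoring, usually rests on some drift statistic. The sketch below uses the population stability index (PSI) as one common choice. The article does not prescribe a metric, so the statistic, the bin count, and the 0.25 alert threshold (a widely used rule of thumb) are assumptions here.

```python
import math


def population_stability_index(baseline, recent, bins=10):
    """PSI between two samples of a numeric score; 0.0 means identical binned distributions."""
    lo, hi = min(baseline), max(baseline)
    width = (hi - lo) / bins or 1.0  # guard against a constant baseline

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[max(0, min(bins - 1, idx))] += 1  # clamp out-of-range values
        # A small floor keeps the logarithm defined for empty bins.
        return [max(c / len(values), 1e-6) for c in counts]

    base, cur = proportions(baseline), proportions(recent)
    return sum((c - b) * math.log(c / b) for b, c in zip(base, cur))


def drift_alert(baseline, recent, threshold=0.25):
    """Flag drift when PSI exceeds the (assumed) threshold."""
    return population_stability_index(baseline, recent) > threshold


# Illustrative data: scores at deployment time versus a shifted recent window.
baseline_scores = [i / 100 for i in range(100)]
recent_scores = [0.5 + i / 200 for i in range(100)]

print(drift_alert(baseline_scores, baseline_scores))  # False
print(drift_alert(baseline_scores, recent_scores))    # True
```

An alert like this would feed the rapid-response loop, while the slower weekly and quarterly reviews would look at how often alerts fire across the portfolio.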

NIST AI Risk Management Framework

The NIST AI Risk Management Framework structures continuous governance improvement through four interconnected functions:

- The Govern function establishes organizational culture, roles, and policies that enable effective risk management

- The Map function identifies AI system context, including intended purposes, potential impacts, and applicable regulations

- The Measure function implements assessments to understand AI system trustworthiness across dimensions like fairness, reliability, and security

- The Manage function allocates resources to address identified risks and implements ongoing monitoring

ISO/IEC 42001 Management System

ISO/IEC 42001 provides a complementary framework emphasizing management system principles for AI governance. This standard specifies requirements for establishing, implementing, maintaining, and continually improving an AI management system. The standard’s Plan-Do-Check-Act cycle mirrors the iterative learning approach that distinguishes high-performing AI organizations.

Post-Deployment Audits

Post-deployment audits serve as particularly valuable learning opportunities, revealing how AI systems perform in actual operating conditions. These audits examine:

- Technical performance metrics like accuracy and latency

- Fairness measures across different population segments

- Security incidents or vulnerabilities discovered

- User feedback and satisfaction

- Business value delivered and governance process effectiveness
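For the fairness item in the audit list above, one simple check compares outcome rates across population segments. The sketch below computes per-segment approval rates and their min-to-max ratio. The record format and sample data are hypothetical, and the 0.8 cut-off follows the commonly cited four-fifths heuristic, used here as an assumption rather than a legal threshold.

```python
from collections import defaultdict


def selection_rates(records):
    """Approval rate per segment from (segment, approved) pairs."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for segment, approved in records:
        totals[segment] += 1
        positives[segment] += int(approved)
    return {s: positives[s] / totals[s] for s in totals}


def disparate_impact_ratio(rates):
    """Lowest segment rate divided by the highest; 1.0 means parity."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0


def needs_fairness_review(records, min_ratio=0.8):
    """Flag the system when the ratio falls below the assumed cut-off."""
    return disparate_impact_ratio(selection_rates(records)) < min_ratio


# Illustrative audit sample: segment A approved 8 of 10, segment B approved 4 of 10.
audit_sample = (
    [("A", True)] * 8 + [("A", False)] * 2 +
    [("B", True)] * 4 + [("B", False)] * 6
)

print(selection_rates(audit_sample))
print(needs_fairness_review(audit_sample))  # True
```

A single ratio is a screening signal only. A flagged result would normally trigger the deeper review and the knowledge artifacts described earlier, not an automatic conclusion about the model.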

From Compliance to Competitive Advantage

A mature AI governance learning capability transcends regulatory compliance to become a strategic business differentiator that enables faster innovation, larger-scale deployment, and stronger market positioning. Organizations achieving this transformation discover that rigorous governance paradoxically accelerates rather than constrains AI adoption.

When governance processes are slow, opaque, or inconsistent, teams work around them through shadow IT deployments that circumvent oversight. When governance provides clear guidelines, rapid approval for low-risk initiatives, and transparent decision-making, teams embrace it as an enabler that de-risks innovation.

Building Strategic Trust

Strategic trust emerges when stakeholders gain confidence that an organization deploys AI systems responsibly, ethically, and reliably. This trust manifests across multiple constituencies:

- Customers trust that AI interactions respect their privacy, provide accurate information, and treat them fairly

- Employees trust that AI augments rather than threatens their roles and operates transparently

- Regulators trust that governance frameworks proactively address risks rather than waiting for mandates

- Investors trust that AI initiatives deliver returns while managing downside risks appropriately

Quantifying Governance ROI

Research from EY indicates that organizations with real-time AI monitoring and formal oversight committees are:

- 34% more likely to report revenue growth

- 65% more likely to report cost savings

Governance enables faster, safer scaling by reducing the time spent debugging production issues, recovering from incidents, or navigating regulatory challenges. This virtuous cycle compounds over time, with early governance investments yielding accelerating returns.

Total Cost of AI Ownership

Organizations without mature governance incur substantial hidden costs:

- Extended development cycles as teams navigate ambiguous requirements

- Expensive post-deployment fixes when issues surface in production

- Regulatory fines or legal settlements when systems violate rules

- Reputation damage that reduces customer trust and lifetime value

- Opportunity costs when governance bottlenecks prevent teams from pursuing valuable AI initiatives

Automated Guardrails

Automated, learning-based guardrails dramatically reduce time-to-market for AI initiatives while maintaining appropriate oversight. Automated systems evaluate AI proposals against learned patterns, instantly approving low-risk initiatives that meet predefined criteria while flagging higher-risk projects for human review.

Teams gain predictability, knowing which AI use cases will receive rapid approval and which require deeper scrutiny.
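A minimal sketch of the triage step follows. It uses fixed, hand-written criteria rather than learned patterns, which keeps the example short. The proposal fields and the three criteria are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Proposal:
    """Hypothetical intake form for a new AI initiative."""
    name: str
    uses_personal_data: bool
    makes_automated_decisions: bool
    customer_facing: bool


def triage(proposal: Proposal) -> tuple[str, list[str]]:
    """Auto-approve only when no predefined risk criterion is triggered."""
    reasons = []
    if proposal.uses_personal_data:
        reasons.append("processes personal data")
    if proposal.makes_automated_decisions:
        reasons.append("decides without a human in the loop")
    if proposal.customer_facing:
        reasons.append("output reaches customers directly")
    decision = "human_review" if reasons else "auto_approve"
    return decision, reasons


doc_search = Proposal("internal document search", False, False, False)
chat_agent = Proposal("customer chat agent", True, False, True)

print(triage(doc_search))  # ('auto_approve', [])
print(triage(chat_agent))
```

Returning the reasons alongside the decision is what gives teams the predictability described above: a proposer can see exactly which criterion sent an initiative to human review.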

Transform Your AI Governance Approach

The evolution of AI governance from compliance checklist to organizational learning capability represents one of the most critical transformations enterprises must navigate. Organizations that complete this journey gain the ability to deploy AI faster, at larger scale, and with greater confidence.

Strategic investment in AI fluency, contextual governance systems, continuous feedback loops, and governance automation pays compounding returns that extend well beyond regulatory compliance. Organizations building these capabilities today position themselves to capture disproportionate value as AI technologies continue advancing.

The frameworks examined throughout this analysis, particularly the NIST AI Risk Management Framework and ISO/IEC 42001, provide actionable blueprints for organizations at any stage of their governance journey.

As AI capabilities expand and societal expectations around responsible AI deployment intensify, those building governance as a dynamic capability will accelerate ahead, leveraging their governance infrastructure as a platform for rapid, responsible innovation.

FAQ

1. What are the four pillars of AI governance?

The four pillars of AI governance are Transparency, Accountability, Fairness, and Security and Data Integrity.

- Transparency ensures AI decisions, data sources, and limitations are clearly explained.

- Accountability defines ownership for AI outcomes, monitoring, and issue resolution.

- Fairness prevents bias and discriminatory outcomes through ongoing evaluation.

- Security and Data Integrity protect AI systems and data from misuse, attacks, and privacy breaches.

2. What are the five core principles of AI?

The five core principles of AI are:

- Inclusive Growth and Well-being

- Sustainable Development

- Human-Centered Values and Fairness

- Transparency and Explainability

- Robustness, Security, and Safety

These principles ensure AI benefits society broadly, minimizes environmental impact, respects human rights, remains understandable to stakeholders, and operates reliably under real-world conditions.

3. What are the four capabilities of an AI system?

AI systems demonstrate four key capabilities: Learning, Reasoning, Interaction, and Perception.

- Learning allows AI to improve through data.

- Reasoning enables decision making and problem solving.

- Interaction supports communication with humans and systems.

- Perception allows interpretation of images, audio, and sensor data.

4. What are the key capabilities of AI governance?

Effective AI governance requires:

- Risk Identification and Assessment

- Policy Development and Enforcement

- Monitoring and Auditing

- Incident Response and Learning

- Stakeholder Engagement and Communication

These capabilities ensure AI risks are identified early, rules are consistently applied, systems are continuously monitored, failures are addressed, and trust is maintained.

5. What are the five pillars of data governance?

The five pillars of data governance are:

- Data Quality

- Data Privacy and Security

- Data Lifecycle Management

- Data Architecture and Integration

- Data Stewardship and Ownership

Strong data governance ensures AI systems are built on accurate, secure, traceable, and responsibly managed data.

6. What are three governing principles for AI system usage?

Three fundamental principles guide responsible AI usage:

- Human Oversight and Control

- Purpose Limitation and Data Minimization

- Continuous Monitoring and Improvement

These principles ensure humans remain accountable, AI is used only for defined purposes, and systems are regularly reviewed as conditions change.

7. How should organizations apply AI governance in practice?

Organizations should invest in AI literacy, establish clear accountability, deploy monitoring tools, and encourage open discussion of AI risks.

Standards such as the NIST AI RMF and ISO/IEC 42001 provide practical frameworks to operationalize governance.

Strong AI governance enables scalable innovation, regulatory confidence, and long-term competitive advantage.
